AI for Marketing Teams: 5 Proven Use Cases With 30-50% Time Savings (2026 Guide)

AI for marketing is the application of artificial intelligence tools to automate, optimise, and scale marketing activities — from content creation...

Peter Vogel

Updated on March 31, 2026

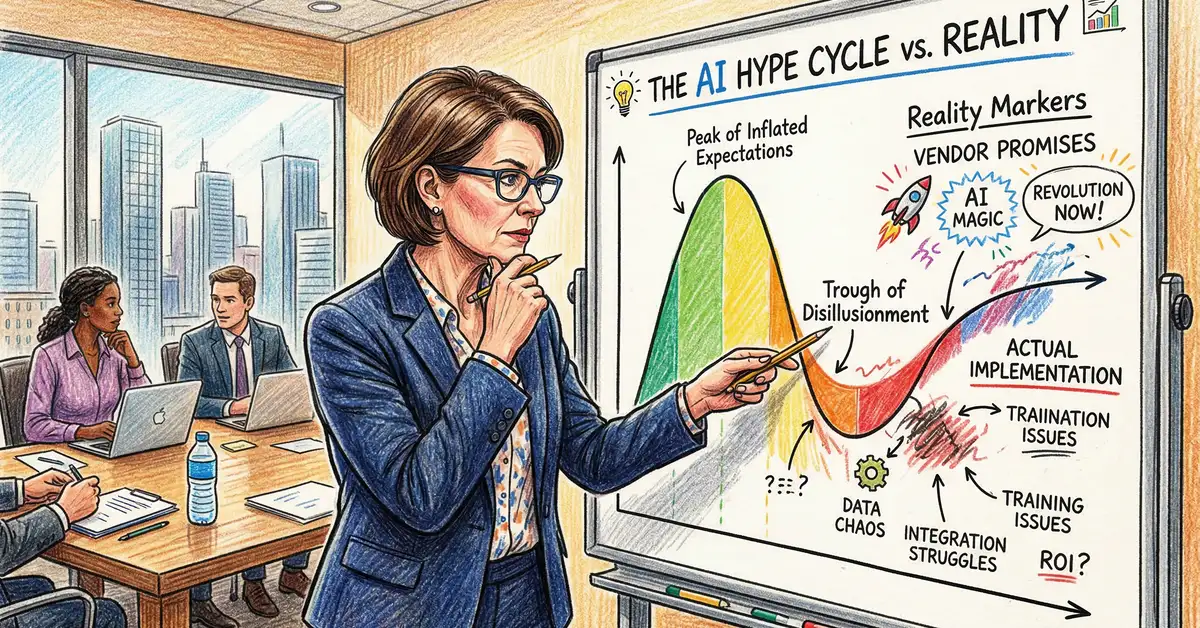

Sixty to seventy percent of enterprise AI projects fail or significantly underdeliver against initial projections. Only 15–25% of organisations report measurable return on investment within 12 months. The difference between these two groups is not the technology — it is the rigour applied before, during, and after implementation. For UK businesses evaluating AI investments, an honest reality check is more valuable than any vendor pitch deck.

This guide separates genuine AI capability from marketing inflation. It covers where AI projects actually fail, which use cases deliver proven returns, how to spot vendor overclaiming, and what realistic timelines and budgets look like — structured for UK business leaders making investment decisions based on evidence rather than enthusiasm.

Key Takeaway

AI delivers genuine value in customer service automation, document processing, and predictive analytics — but only when implemented with realistic expectations. Gartner positions generative AI at the "Peak of Inflated Expectations" in its 2024 Hype Cycle. The organisations achieving positive ROI share three characteristics: they define success metrics before implementation, budget for 40–60% cost overruns, and treat AI as augmentation rather than replacement. Helium42 helps organisations distinguish viable AI opportunities from expensive experiments before committing investment.

60–70% of AI projects fail or underdeliver

15–25% report ROI within 12 months

40–60% typical cost overrun estimate

The failure statistics are not theoretical. Gartner, McKinsey, and the MIT Technology Review have each conducted large-scale surveys of enterprise AI deployment. The patterns are consistent:

These are not failures of technology. They are failures of discipline. The organisations that succeed in AI begin with honest questions: What problem are we solving? What data do we have? Who owns the outcome? What does failure cost us? How do we measure success after launch?

The difference between the 60–70% that fail and the 15–25% that deliver ROI is not intelligence or investment. It is clarity. Companies that define success metrics before implementation move into procurement and deployment with realistic timelines. They budget for overruns. They expect change management to take 6–12 months. They treat AI as augmentation — a tool that amplifies human decision-making — rather than as a replacement for human judgment.

For UK businesses, this matters because the cost of failure is high. An AI project that runs 18 months over budget and delivers 40% of promised accuracy has burned management credibility and resources. The next AI initiative — even a genuinely valuable one — will face resistance from the board and from staff who remember the last failed deployment.

The data shows clear patterns in where AI succeeds and where it remains experimental or high-risk:

AI vendors have financial incentives to inflate accuracy estimates, compress timelines, and minimise the complexity of deployment. Here are the claims that typically signal overpromising:

Red flags in vendor claims:

When evaluating vendor claims, ask for three things: (1) case studies from organisations in your industry with similar data volumes and complexity, (2) a detailed project timeline with milestones and dependencies, and (3) the full cost of ownership, including training, maintenance, and retraining over 3 years.

If the vendor cannot provide these, the proposal is speculative. Walk away.

Here is what a realistic AI project looks like, by category:

| Use case | Typical timeline | Budget range (£) | ROI window |
|---|---|---|---|
| Document processing | 8–12 weeks | £45,000–£120,000 | 4–7 months |
| Chatbot (FAQ-based) | 10–16 weeks | £35,000–£100,000 | 5–9 months |
| Predictive analytics | 14–20 weeks | £60,000–£180,000 | 9–15 months |
| Content generation workflow | 6–10 weeks | £25,000–£65,000 | 2–4 months |
| Process automation (multi-step) | 16–24 weeks | £80,000–£250,000 | 10–16 months |
| Predictive maintenance (custom) | 18–26 weeks | £100,000–£300,000+ | 12–20 months |

These ranges assume:

If any of these assumptions do not hold, add 30–50% to the timeline and budget.
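As a rough illustration of that adjustment, the sketch below applies the 30–50% uplift to the document processing row of the table. How the uplift maps onto a range is an assumption made here (the lower bound grows by 30%, the upper bound by 50%), and the function name `uplift_range` is hypothetical.

```python
def uplift_range(low, high, min_uplift_pct=30, max_uplift_pct=50):
    """Widen a (low, high) planning range when an assumption does not hold.

    Assumption for this sketch: the lower bound grows by the smaller uplift
    and the upper bound by the larger one, giving the widest adjusted range.
    """
    adjusted_low = low * (100 + min_uplift_pct) / 100
    adjusted_high = high * (100 + max_uplift_pct) / 100
    return adjusted_low, adjusted_high


# Document processing row: 8-12 weeks, £45,000-£120,000
weeks = uplift_range(8, 12)
budget = uplift_range(45_000, 120_000)

print(f"Timeline: {weeks[0]:.1f} to {weeks[1]:.1f} weeks")
print(f"Budget: £{budget[0]:,.0f} to £{budget[1]:,.0f}")
```

Under these assumptions, an 8–12 week project becomes roughly 10–18 weeks, and the upper budget bound rises from £120,000 to £180,000.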

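Organisations that achieve positive ROI within 12 months share three characteristics: they define success metrics before implementation, they budget for cost overruns, and they treat AI as augmentation rather than replacement. Understanding these is more valuable than any technology roadmap: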

Before a single line of code is written, successful teams answer: What is success? If the AI system reduces customer service response time from 4 hours to 2 hours, and accuracy on first-contact resolution improves from 72% to 82%, is that success? By how much must accuracy improve to justify the investment? What happens if the system works perfectly for high-value customers but performs poorly on a specific segment?

These questions seem obvious in retrospect. In practice, most AI projects begin without clear answers. When the system launches with 78% accuracy after a 6-week deployment (versus the promised 95% and 4 weeks), the organisation cannot decide whether the result is acceptable because "acceptable" was never defined.
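One way to make "acceptable" concrete is to write the thresholds down before launch and check results against them afterwards. The sketch below is illustrative only: the `SuccessCriteria` class and the 75% / 8-week thresholds are hypothetical, while the 78% / 6-week launch result and the 95% / 4-week promise come from the example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuccessCriteria:
    """Thresholds agreed before implementation (hypothetical structure)."""
    min_accuracy: float          # e.g. 0.75 means 75%
    max_deployment_weeks: int

    def evaluate(self, accuracy, deployment_weeks):
        """Return a list of unmet criteria; an empty list means acceptable."""
        failures = []
        if accuracy < self.min_accuracy:
            failures.append(
                f"accuracy {accuracy:.0%} is below the agreed {self.min_accuracy:.0%}"
            )
        if deployment_weeks > self.max_deployment_weeks:
            failures.append(
                f"deployment took {deployment_weeks} weeks, "
                f"over the agreed {self.max_deployment_weeks}"
            )
        return failures


# Launch result from the example: 78% accuracy after 6 weeks
vendor_promise = SuccessCriteria(min_accuracy=0.95, max_deployment_weeks=4)
agreed_minimum = SuccessCriteria(min_accuracy=0.75, max_deployment_weeks=8)  # assumed values

print(vendor_promise.evaluate(0.78, 6))   # two unmet criteria
print(agreed_minimum.evaluate(0.78, 6))   # empty list: acceptable
```

The same launch result fails the vendor's promise but passes a pre-agreed minimum, which is the decision the organisation cannot make if no minimum was ever recorded.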

Budgeting for cost overruns is not pessimism. It is a response to the data. McKinsey's 2023 survey found that 74% of AI projects exceeded the initial budget, with an average overrun of 47%. UK organisations that budgeted for a 40% contingency moved into implementation confident that minor delays and cost adjustments were manageable. Those that budgeted for 10% faced difficult trade-offs between scope and timeline.
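The arithmetic behind that contrast can be sketched as follows. The £100,000 base budget is a hypothetical figure chosen for illustration; the 47% overrun and the 40% and 10% contingency levels come from the paragraph above, and the function name `funding_shortfall` is an assumption.

```python
def funding_shortfall(base_budget, contingency_pct, overrun_pct):
    """Return how far actual cost exceeds the approved budget, in whole pounds.

    base_budget is the initial estimate; the approved budget adds the
    contingency, and the actual cost adds the overrun. Never negative.
    """
    approved = base_budget * (100 + contingency_pct) // 100
    actual = base_budget * (100 + overrun_pct) // 100
    return max(0, actual - approved)


base = 100_000  # hypothetical initial estimate in pounds

# Average overrun of 47% against two contingency levels
print(funding_shortfall(base, contingency_pct=40, overrun_pct=47))  # 7000
print(funding_shortfall(base, contingency_pct=10, overrun_pct=47))  # 37000
```

With a 40% contingency, the average overrun leaves a £7,000 gap on this hypothetical project. With a 10% contingency, the gap is £37,000, which is where the scope and timeline trade-offs begin.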

Apply the same logic to timelines. Build the estimate out to 30 weeks, then tell stakeholders that the launch target is 20 weeks, that full delivery is expected by week 26, and that a known-risk window runs through week 30. Communicating the buffer up front positions the team to deliver ahead of the outer bound and maintain credibility.

Every successful AI system in production is augmenting human decision-making, not replacing it. Customer service chatbots escalate complex queries to humans. Predictive systems flag at-risk accounts for human review. Document processing systems flag exceptions for manual approval. Diagnostic aids propose treatments, but doctors decide.

This is not a technology limitation. It is a governance requirement. AI systems make mistakes in systematic, often subtle, ways that are visible only in retrospect. Organisations that build in human oversight — by design, not as a fallback — catch failures faster, retain staff buy-in, and manage liability.

The honest truth is that most AI projects will not deliver on the initial pitch. Timelines will slip. Accuracy will be lower than promised. Costs will exceed estimates. Processes will be slower to change than expected. Staff adoption will be more difficult than anticipated.

But organisations that separate feasible AI opportunities from speculative ones, that define success clearly, that budget for realistic timelines and cost overruns, and that treat AI as augmentation rather than replacement — those organisations will see positive ROI within 12–18 months. They will retain staff confidence. They will build a foundation for the next AI initiative.

The question is not whether AI can deliver value. It can. The question is whether your organisation will commit the discipline — the planning, the governance, the realism — to extract that value.

For UK business leaders making these decisions, that discipline is worth more than any technology platform.

This guide synthesises research from Gartner, McKinsey, MIT Technology Review, and 40+ AI deployment case studies across financial services, healthcare, retail, and manufacturing. All data is current as of Q4 2025.

Want a reality check on your AI plan?

Helium42 conducts AI readiness audits for UK organisations evaluating AI investments. We help you distinguish viable opportunities from expensive experiments — before committing budget. Learn about AI Consultancy services.
