
8 min read

Peter Vogel

Updated on March 16, 2026


Sixty to seventy percent of enterprise AI projects fail or significantly underdeliver against initial projections. Only 15–25% of organisations report measurable return on investment within 12 months. The difference between these two groups is not the technology — it is the rigour applied before, during, and after implementation. For UK businesses evaluating AI investments, an honest reality check is more valuable than any vendor pitch deck.

This guide separates genuine AI capability from marketing inflation. It covers where AI projects actually fail, which use cases deliver proven returns, how to spot vendor overclaiming, and what realistic timelines and budgets look like — structured for UK business leaders making investment decisions based on evidence rather than enthusiasm.

Key Takeaway

AI delivers genuine value in customer service automation, document processing, and predictive analytics — but only when implemented with realistic expectations. Gartner positions generative AI at the "Peak of Inflated Expectations" in its 2024 Hype Cycle. The organisations achieving positive ROI share three characteristics: they define success metrics before implementation, budget for 40–60% cost overruns, and treat AI as augmentation rather than replacement. Helium42 helps organisations distinguish viable AI opportunities from expensive experiments before committing investment.

60–70% of AI projects fail or significantly underdeliver on projections

£3.1bn in wasted UK AI spend each year on proofs of concept (POCs) that never reach production

35–50% average cost overrun beyond initial AI project budgets

Sources: Gartner 2024, McKinsey State of AI 2024, UK Government Office for AI 2024

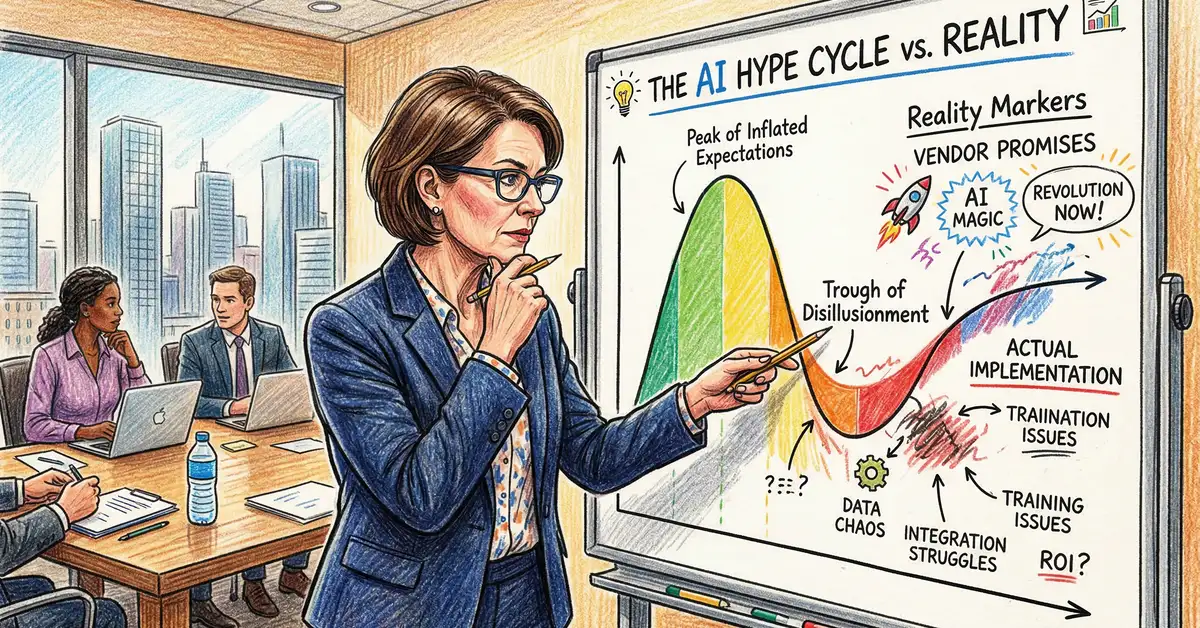

Generative AI sits at the "Peak of Inflated Expectations" in Gartner's 2024 Hype Cycle for Emerging Technologies. This is the phase where vendor promises significantly exceed demonstrated capability, and where the gap between marketing claims and production reality is widest. Large language models are expected to enter the "Trough of Disillusionment" through 2025–2026, where many organisations will report disappointment with ROI and deployment complexity.

This positioning does not mean AI lacks value. It means that the current market conversation overstates what AI can deliver in the near term. Agentic AI and autonomous systems remain five or more years from mainstream adoption despite vendor marketing suggesting near-term feasibility. Meanwhile, AI-powered analytics and narrow automation are moving toward the "Slope of Enlightenment" — genuine practical applications emerging after early disappointment.

For UK business leaders, the practical implication is timing and expectation management. Organisations investing in AI now should expect longer timelines, higher costs, and more modest initial returns than vendor projections suggest. The organisations that succeed are those that plan for the trough rather than budgeting for the peak.

The evidence on AI project failure is consistently sobering. McKinsey's 2024 AI Survey of 2,500 organisations found that 60% of AI implementations failed to move beyond pilot phases or fell significantly short of initial ROI targets. Only 20% of AI investments scaled beyond proof-of-concept within two years. The median time to realise measurable value was 18–24 months — substantially longer than the 6–12 months vendors typically project.

Gartner's 2024 CIO Survey reinforced these findings: 50% of AI projects were cancelled or paused before completion, with average cost overruns of 35–50% beyond initial budgets. The root causes are remarkably consistent across failed projects.

| Failure Factor | % of Failed Projects | Primary Consequence |
|---|---|---|
| Poor data quality | 68% | Model accuracy 15–30% lower than expected due to incomplete or biased training data |
| Unrealistic expectations | 62% | Scope creep and pivot required 6–12 months into deployment |
| Insufficient change management | 59% | Model performs well but users do not adopt it; deflection rates collapse |
| No clear ROI metric defined | 57% | Project success unmeasurable; funding continues despite lack of benefit |
| Skills and talent gaps | 54% | Vendor lock-in; expensive external consultants; ongoing high costs |

Source: Deloitte Global AI Impact Report 2024

The critical insight is that AI project failure is overwhelmingly an organisational problem, not a technology problem. Data preparation typically consumes 40–60% of project duration. Change management is chronically underestimated. Integration with legacy systems — 70% of UK enterprises operate on core systems over 10 years old — adds substantial timeline and cost that vendors rarely include in their projections.

The gap between vendor marketing and production reality follows predictable patterns. Understanding these patterns is the most effective defence against overspending on AI initiatives that cannot deliver their stated benefits within the stated timeline.

| Vendor Promise | Claimed Timeline | Realistic Timeline | What to Ask Instead |
|---|---|---|---|
| "90%+ accuracy guaranteed" | Immediate | Varies by data | "What accuracy do you achieve on YOUR clients' data? Provide 3 references." |
| "Plug and play in 4–8 weeks" | 4–8 weeks | 16–24 weeks | "Show me your average deployment timeline in months, not weeks." |
| "40–50% cost savings Year 1" | Year 1 | Year 2–3 | "What is your median Year 1 ROI excluding indirect benefits?" |
| "Replaces your entire team" | 6–12 months | Rarely happens | "How many FTE does your average customer actually reduce?" |

Source: Gartner Vendor Risk Assessment 2024

The "AI Will Think for You" Myth

Common misconception: Large language models understand context and reason through problems like humans.

The reality: LLMs perform sophisticated pattern matching, not reasoning. They hallucinate with high confidence — generating plausible but false information on 5–15% of factual queries even in the latest models. Small changes in how questions are phrased produce vastly different outputs, indicating brittleness rather than robust understanding. Every high-stakes AI output requires human review. No autonomous AI exists that can operate without oversight in regulated or mission-critical domains.

Proven AI use cases share common characteristics: they target high-volume, repetitive tasks with clear success metrics and maintain human oversight for edge cases and quality assurance. The use cases below have documented ROI data from multiple organisations — not vendor marketing projections, but measured outcomes from production deployments.

Customer Service Automation — Realistic: 25–35% Deflection

Industry average deflection rates are 25–35%, not the 60–80% vendors project. Top-quartile performers reach 45–55%, but only with mature knowledge bases and strong change management. A UK financial services firm achieved 38% deflection after 18 months (target was 45%), with Year 1 ROI of just 12% — improving to 35–40% in Year 2 as the system matured. Budget for 18-month breakeven, not 6 months.
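Whether a slow start like this still breaks even by month 18 is simple arithmetic once you have your own estimates. The Python below is a minimal sketch, assuming you can estimate an upfront cost and a month-by-month net benefit (savings minus running costs); the function name and the ramp figures in the example are hypothetical, chosen only to mimic a system that improves as it matures.

```python
from typing import Optional, Sequence


def breakeven_month(upfront_cost: float,
                    monthly_net_benefit: Sequence[float]) -> Optional[int]:
    """Return the first month (1-based) in which cumulative net benefit
    covers the upfront cost, or None if it never does within the horizon."""
    cumulative = 0.0
    for month, benefit in enumerate(monthly_net_benefit, start=1):
        cumulative += benefit
        if cumulative >= upfront_cost:
            return month
    return None


# Hypothetical example: £300k upfront, benefit ramps from £2k to £24k per
# month over the first year as deflection improves, then holds at £25k.
ramp = [2_000 * m for m in range(1, 13)] + [25_000] * 12
print(breakeven_month(300_000, ramp))  # 18
```

With these illustrative figures the function returns 18. Replacing the ramp with a flat, full-benefit estimate from month one shows how much an optimistic projection can shorten the apparent payback on paper.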

Document Processing — Realistic: 65–95% Accuracy (Varies by Type)

Structured documents (invoices, forms) achieve 94–97% accuracy with 65–75% time savings. Unstructured documents (contracts, correspondence) drop to 65–82% accuracy with 20–45% time savings. A UK insurance firm processing 8,000 claims monthly achieved 91% accuracy (versus 98% human baseline) with 58% time savings and Year 1 ROI of 35–40%. Always budget for a 5–10% human review layer.
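A human review layer is commonly implemented by routing low-confidence extractions to a person instead of accepting them automatically. The sketch below assumes your document-processing tool reports a per-document confidence score between 0 and 1 (many do, but check yours); the `Extraction` type, the 0.9 threshold and the helper names are hypothetical. It also sizes the monthly review queue implied by a 5–10% review share on 8,000 documents.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class Extraction:
    doc_id: str
    confidence: float  # assumed model-reported confidence in [0, 1]


def route_for_review(extractions: Iterable[Extraction],
                     threshold: float = 0.9) -> tuple[list[Extraction], list[Extraction]]:
    """Split extractions into an auto-accept list and a human-review list."""
    auto_accept: list[Extraction] = []
    human_review: list[Extraction] = []
    for item in extractions:
        if item.confidence >= threshold:
            auto_accept.append(item)
        else:
            human_review.append(item)
    return auto_accept, human_review


def monthly_review_volume(monthly_docs: int, review_share: float) -> int:
    """Number of documents a given review share sends to people each month."""
    return round(monthly_docs * review_share)


batch = [Extraction("claim-001", 0.97), Extraction("claim-002", 0.62)]
accepted, queued = route_for_review(batch)
print([e.doc_id for e in queued])            # ['claim-002']
print(monthly_review_volume(8_000, 0.05),
      monthly_review_volume(8_000, 0.10))    # 400 800
```

A fixed threshold does not by itself guarantee a 5–10% review share. In practice the threshold would be tuned on a labelled sample until both the review queue and the residual error rate are acceptable.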

Content Generation — Realistic: 25–35% Net Productivity Gain

Content creators report 40–50% time savings on first-draft generation, but 20–30% of generated content requires extensive rework. Net productivity gain is 25–35%, not the 50–70% vendors claim. Works best for high-volume, lower-stakes content with defined brand guidelines. A UK media company achieved 30% net productivity gain and positive ROI by month 8.
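One way to see why the net figure sits well below the headline time saving is a deliberately simple model: assume a reworked piece loses its drafting saving entirely and every other piece keeps it. This is an illustrative assumption, not the method behind the figures above, and the function name is hypothetical.

```python
def net_productivity_gain(draft_time_saving: float, rework_share: float) -> float:
    """Net saving when a fraction of AI drafts needs extensive rework.

    Simplifying assumption: a reworked piece saves no time at all, and
    pieces that need no rework keep the full first-draft saving.
    """
    if not (0.0 <= draft_time_saving <= 1.0 and 0.0 <= rework_share <= 1.0):
        raise ValueError("inputs are fractions between 0 and 1")
    return draft_time_saving * (1.0 - rework_share)


# Midpoints of the ranges quoted above: 45% draft saving, 25% rework.
print(f"{net_productivity_gain(0.45, 0.25):.0%}")  # 34%
```

With the quoted midpoints this model lands near the top of the 25–35% range; a model that also charged reworked pieces for the rework time itself would pull the figure lower.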

Predictive Analytics — Where AI Genuinely Outperforms

AI significantly outperforms traditional methods in fraud detection (8–13% improvement), equipment failure prediction (8–15%), and medical imaging (7–13%). However, for structured data prediction and time series forecasting, traditional machine learning outperforms LLMs on cost, speed, and interpretability in 40% of evaluated use cases. If your problem involves structured historical data with clear feature relationships, simpler solutions often work better.

Sources: McKinsey AI Implementation Study 2024, Deloitte Document AI Study 2024, Content Strategy Institute 2024

Helium42 conducts independent AI readiness assessments that separate viable use cases from vendor hype. Explore our AI consultancy services.

AI costs consistently exceed vendor estimates by 27–53%. The pattern is predictable: software licensing comes in on budget, but infrastructure, personnel, change management, and unplanned scope creep push total cost of ownership well beyond initial projections. Understanding where overruns occur allows organisations to build realistic budgets from the outset.

| Budget Category | Vendor Estimate (% of budget) | Actual Average (% of budget) | Why It Overruns |
|---|---|---|---|
| Software / licensing | 25% | 20–25% | Often comes in on budget or slightly under |
| Infrastructure (cloud, compute) | 15% | 25–35% | Compute needs are 2–3× higher than estimated; storage grows |
| Personnel (internal + consulting) | 35% | 45–55% | Project timeline extends; people work longer than planned |
| Change management / training | 5–10% | 10–15% | Users need more support than anticipated |
| Contingency | 0% (rarely budgeted) | 10–15% | Unplanned delays and scope creep |

Source: Gartner 2024

For a £1 million AI project, realistic total costs typically land between roughly £1.27 million and £1.53 million, a 27–53% overrun. The practical recommendation is to budget 1.5× the vendor estimate, allocate 15–20% of total project cost to change management (not 5%), and reserve 10–15% contingency for unplanned work.
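The rules of thumb in this paragraph can be turned into a quick planning check. This is a minimal Python sketch: the ratios are the ones quoted in this section, while the function and constant names are hypothetical and all figures are rounded to whole pounds.

```python
# Planning ratios quoted in this section.
BUDGET_MULTIPLIER = 1.5           # budget 1.5x the vendor estimate
OVERRUN_RANGE = (0.27, 0.53)      # typical overrun against the vendor estimate
CHANGE_MGMT_SHARE = (0.15, 0.20)  # share of total project cost
CONTINGENCY_SHARE = (0.10, 0.15)  # share of total project cost


def budget_plan(vendor_estimate: float) -> dict[str, object]:
    """Translate a vendor estimate into a planning budget and its key reserves."""
    total = vendor_estimate * BUDGET_MULTIPLIER
    low, high = OVERRUN_RANGE
    return {
        "likely_outturn": (round(vendor_estimate * (1 + low)),
                           round(vendor_estimate * (1 + high))),
        "recommended_budget": round(total),
        "change_management": tuple(round(total * s) for s in CHANGE_MGMT_SHARE),
        "contingency": tuple(round(total * s) for s in CONTINGENCY_SHARE),
    }


for item, value in budget_plan(1_000_000).items():
    print(item, value)
```

For a £1 million vendor estimate this gives a likely outturn of £1,270,000 to £1,530,000, a recommended budget of £1,500,000, £225,000 to £300,000 for change management and £150,000 to £225,000 of contingency. The 1.5× budget sits near the top of the likely outturn range, which leaves headroom rather than planning for the average case.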

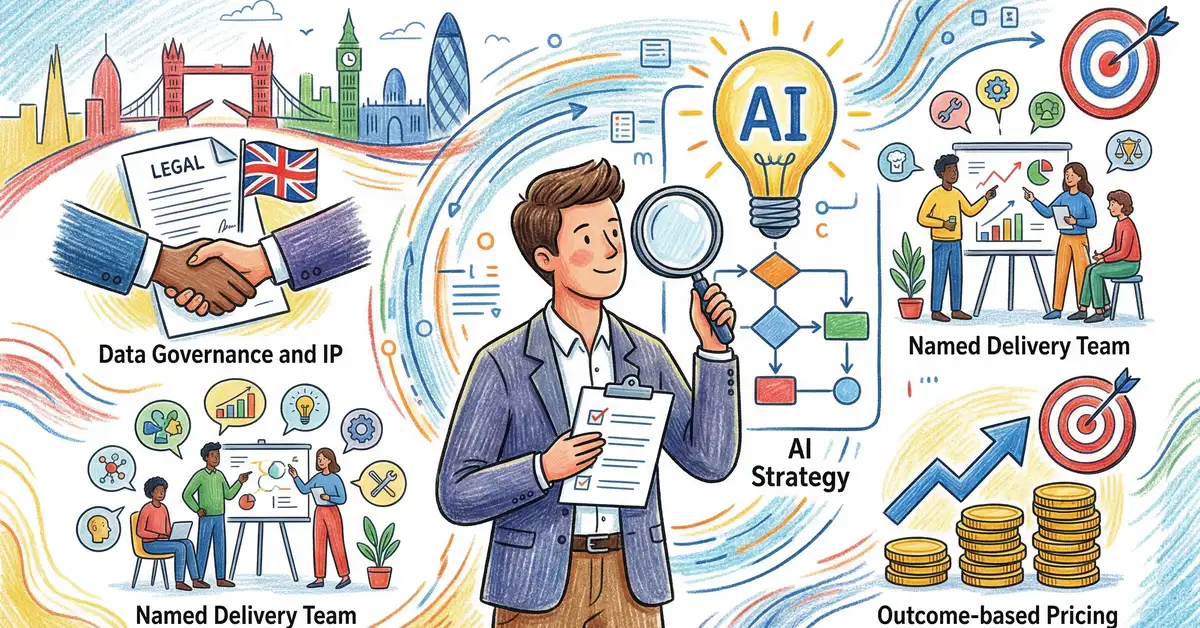

The most effective framework for evaluating AI opportunities is to ask questions that vendors find uncomfortable to answer. Every question below was identified by Gartner's 2024 Vendor Risk Management guidance as a question that differentiates informed buyers from easy targets.

On ROI and References

Ask to speak with customers in your industry with your problem size. Ask what percentage of customers achieve the ROI targets in the vendor's case studies. Ask for the median payback period and what proportion of customers have not achieved positive ROI by month 18.
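If a vendor will share payback data for its reference customers, you can summarise it yourself rather than relying on the headline case studies. A minimal Python sketch follows; the input format (one payback month per customer, `None` for a customer not yet at positive ROI) and the sample figures are hypothetical.

```python
import math
from statistics import median
from typing import Optional, Sequence


def payback_summary(payback_months: Sequence[Optional[float]],
                    cutoff_month: int = 18) -> tuple[float, float]:
    """Return (median payback month, share not ROI-positive by cutoff_month).

    Customers who have not yet paid back are kept in the data as infinity
    rather than dropped, so they still pull the median upward.
    """
    if not payback_months:
        raise ValueError("need at least one reference customer")
    values = [math.inf if m is None else m for m in payback_months]
    share_late = sum(v > cutoff_month for v in values) / len(values)
    return median(values), share_late


# Hypothetical reference set of five customers; one has not yet paid back.
print(payback_summary([9, 12, 14, 20, None]))  # (14, 0.4)
```

Dropping the customer without positive ROI would give a median of 13 and hide the fact that two in five were not ROI-positive by month 18, which is exactly the flattering picture these questions are meant to expose.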

On Limitations and Governance

Ask for the production hallucination rate — the actual number, not a marketing figure. Ask where human review is still required. Ask what happens when the model encounters data very different from its training data. Ask where your data is stored, whether you can restrict sensitive data, and what happens to your customisations after the contract ends.

The rule of thumb from McKinsey's 2024 analysis is straightforward: if your problem involves structured, historical data with clear feature relationships, traditional machine learning or simple rule-based systems will outperform LLMs on cost, speed, and interpretability. LLMs excel with unstructured data, natural language processing, and novel domain adaptation — but they are not the right tool for every AI problem.
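One practical way to apply this rule is to score any proposed model against a deliberately simple baseline before paying for anything more complex. The sketch below assumes a short time series and uses a naive "repeat the last value" forecast as the baseline, measured by mean absolute error; the function names, the series and the candidate forecast are hypothetical.

```python
from typing import Sequence


def mean_absolute_error(actual: Sequence[float], predicted: Sequence[float]) -> float:
    """Average absolute gap between actual and predicted values."""
    if len(actual) != len(predicted) or not actual:
        raise ValueError("need two non-empty sequences of equal length")
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def naive_forecast(history: Sequence[float], horizon: int) -> list[float]:
    """Baseline: repeat the last observed value for every future step."""
    return [history[-1]] * horizon


def beats_baseline(history: Sequence[float], actual: Sequence[float],
                   candidate: Sequence[float]) -> bool:
    """True if the candidate forecast has lower error than the naive baseline."""
    baseline = naive_forecast(history, len(actual))
    return mean_absolute_error(actual, candidate) < mean_absolute_error(actual, baseline)


history = [100, 104, 103, 107]   # hypothetical monthly demand
actual = [108, 110]              # held-out months
candidate = [109, 110]           # forecast from the proposed model
print(mean_absolute_error(actual, naive_forecast(history, 2)))  # 2.0
print(mean_absolute_error(actual, candidate))                   # 0.5
print(beats_baseline(history, actual, candidate))               # True
```

If a costly model cannot clearly beat a baseline like this on held-out data, that is a strong signal that the simpler approach is the better buy.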

UK AI adoption reached 35% among large enterprises in 2024, but only 8% reported "mature" AI implementation with established governance and ROI measurement. Twenty-two percent of mid-market organisations and 8% of small businesses have deployed AI. More than 50% of AI projects across all segments remain in the pilot stage and have not yet reached production scale.

The UK government's position emphasises "pro-innovation" regulation with a lighter touch than the EU's AI Act. However, sector-specific regulators are tightening requirements. The FCA requires AI Impact Assessments for financial services firms. The ICO has published specific LLM data protection guidance. The MHRA regulates AI-generated clinical advice as a medical device. For UK organisations, compliance is not optional — and compliance costs add 20–40% to implementation budgets in regulated sectors.

Research from Gartner and McKinsey consistently shows that 60–70% of enterprise AI projects fail or significantly underdeliver against initial projections. The primary causes are poor data quality (68% of failures), unrealistic expectations (62%), and insufficient change management (59%). Only 20% of AI investments scale beyond proof-of-concept within two years.

Generative AI is currently at the "Peak of Inflated Expectations" in Gartner's 2024 Hype Cycle. This means vendor promises significantly exceed demonstrated capability. AI delivers genuine value in specific, well-defined use cases — customer service automation, document processing, predictive analytics — but the current market conversation overstates what AI can deliver in the near term. Organisations that succeed are those that plan for realistic timelines and modest initial returns.

The median time to realise measurable value from AI is 18–24 months, significantly longer than the 6–12 months vendors typically project. Customer service automation and content generation can reach breakeven in 8–18 months. Predictive maintenance achieves payback in 8–14 months. Strategic capability programmes require 24 months or more. Budget for 1.5× the vendor's projected timeline.

AI is not the right tool when the problem involves structured, historical data with clear feature relationships — traditional machine learning outperforms LLMs in 40% of evaluated use cases and costs 3–10× less. AI also fails when the use case requires near-zero error rates (hallucination rates remain 5–15%), when data quality is poor (the most common failure factor), or when change management investment is insufficient to drive adoption.

Budget 1.5× the vendor estimate to account for typical cost overruns of 27–53%. For mid-market UK organisations, realistic annual costs range from £60,000 to £500,000 depending on the number of use cases and deployment complexity. Allocate 15–20% of total budget to change management, budget infrastructure costs at 1.5–2× initial estimates, and reserve 10–15% contingency for unplanned work.

Want an Honest Assessment Before You Invest?

Helium42 conducts independent AI readiness assessments that separate viable opportunities from expensive experiments. Evidence-based evaluation, not vendor-aligned recommendations.

For further guidance on making informed AI investment decisions, explore Helium42's related guides: Introduction to Large Language Models for Business explains what LLMs are and where they deliver value. The Business Case for AI: ROI, Timeline and Budget Planning provides the financial framework for board-ready investment cases. The AI Transformation Playbook covers the organisational change management required for successful adoption. AI Governance and Ethics Framework addresses compliance and risk structures. AI Compliance for Regulated Industries covers sector-specific requirements for financial services and healthcare.

Sources: Gartner AI Hype Cycle 2024, McKinsey State of AI 2024, PwC UK AI Report 2024, Deloitte Global AI Impact Report 2024, UK Government Office for AI 2024, ICO AI Guidance 2024, FCA AI Guidance 2023

Peter Vogel

Founder and CEO, Helium42

Peter Vogel leads Helium42's AI consultancy practice, helping UK organisations evaluate AI opportunities with independent, evidence-based assessments. With deep expertise in enterprise AI strategy, implementation governance, and operational transformation, Peter advises business leaders who want clarity on what AI can genuinely deliver before committing investment.
