AI for HR and Recruitment: How UK Businesses Are Transforming People Management

Artificial intelligence is transforming how UK organisations recruit, develop, and retain talent. According to the Hays survey covering 46,000...

12 min read

Peter Vogel

Updated on March 22, 2026

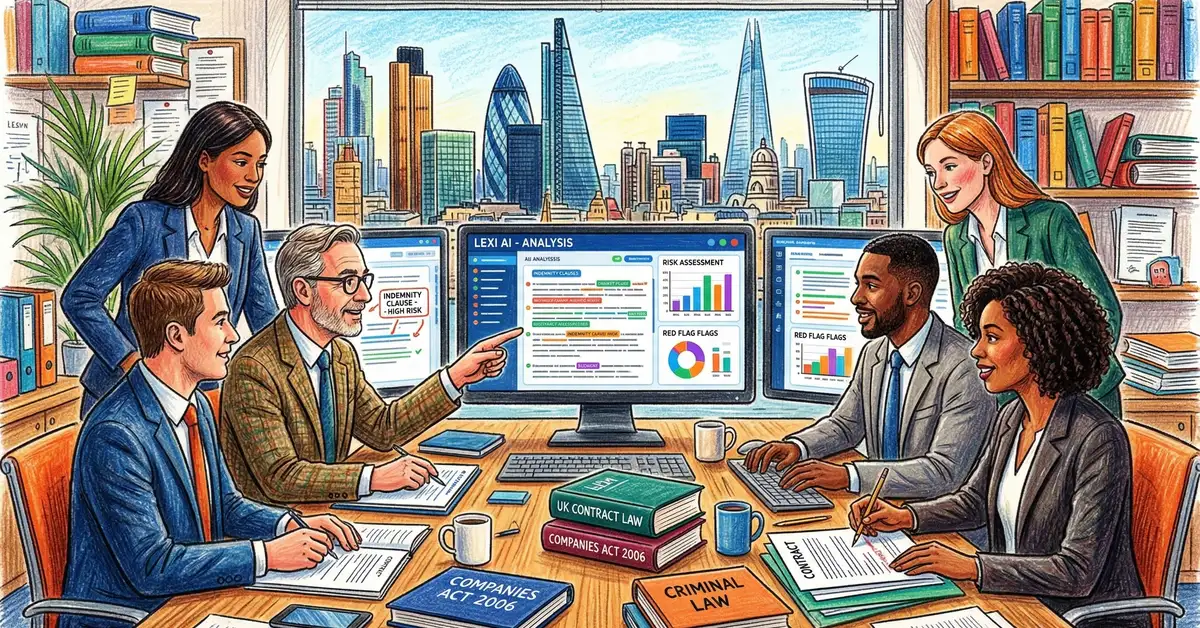

Artificial intelligence in legal practice refers to software systems trained on legal documents, case law, and regulatory frameworks that automate document analysis, research, contract review, and administrative tasks. These systems encompass general-purpose tools such as ChatGPT and Claude alongside legal-specific platforms including Luminance, Harvey, and Thomson Reuters CoCounsel, each offering distinct capabilities and risk profiles. AI for legal departments enables teams to process vast document volumes in hours rather than days, recover billable time currently lost to administrative work, and reduce human error in compliance-sensitive tasks. The UK legal sector recognises this opportunity acutely: 87% of UK legal professionals predict significant AI impact within five years, substantially outpacing global peers at 79% and American colleagues at 75%.

Yet a critical implementation gap persists. Whilst 87% of UK legal professionals predict transformational AI impact within five years, only 38% expect transformational change within their own organisations this year. This divergence between individual lawyer optimism and firm-level implementation reveals both opportunity and risk. Firms that bridge this gap strategically will gain competitive advantage, whilst those that delay risk losing client confidence to increasingly AI-enabled competitors.

UK law firms are deploying AI across five primary workflow categories: document review and due diligence; legal research acceleration; contract analysis and clause extraction; client intake automation; and time tracking and billing compliance. Enterprise-scale firms lead adoption, with 75% of UK top 20 firms actively promoting AI capabilities to clients and deploying both third-party solutions (65%) and proprietary in-house tools (35%). These firms draw on dedicated AI specialists and substantial capital investment to implement advanced applications including contract analysis, predictive litigation analytics, and AI-driven client advisories. Mid-market firms follow at 45% adoption, typically prioritising cost-effective solutions such as cloud-based practice management systems and document automation software. Measured more loosely, as use of AI in at least one workflow, the figure for mid-sized UK law firms reaches 93%, reflecting rapid market-wide penetration within a relatively short timeframe.

The most common implementation pattern focuses on document review and due diligence workflows, where AI tools process contracts and supporting materials to extract clauses, assess risk profiles, and verify compliance against templates and regulations. In-house corporate legal teams have moved decisively beyond pilot phase, with 50% of UK corporate legal departments now expecting transformational change within their operations this year. This in-house adoption creates mounting pressure on external counsel, as corporate clients increasingly expect law firm capabilities to match their own internal AI implementations.

Implementation Reality Check

Document review consumes 60-80% of legal professional time across the sector. AI-powered solutions promise 50-90% reductions in review time, yet actual outcomes depend heavily on document quality, AI training specificity, and personnel capability to validate output before client delivery. Legal-specific AI tools substantially outperform general-purpose models at avoiding hallucinations and fabricated citations.

Organisations adopting AI strategically report measurable productivity gains equivalent to 140-240 hours per lawyer annually, or approximately £12,000-£20,000+ per lawyer in time savings each year at current employment costs. Aggregated across the UK legal profession's approximately 200,000 solicitors at the lower £12,000 figure, this equates to £2.4 billion in total productivity gains by 2026. These figures are conservative near-term projections based on current technology capabilities and adoption rates. Legal professionals expect the gains to rise significantly, to 240 hours of annual savings per lawyer within three years and 370 hours within five years, which would take sector-wide savings past £4 billion within three years and past £6 billion within five.
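The sector-wide figures follow from simple multiplication, which the sketch below reproduces. It uses the per-lawyer values and the 200,000 headcount quoted above; the hourly value applied to the three-year and five-year projections is an assumption, taken from the £20,000 for 240 hours pairing, and the function and variable names are illustrative only.

```python
SOLICITORS = 200_000  # approximate UK headcount quoted in the article


def sector_gain(per_lawyer_gbp: float, headcount: int = SOLICITORS) -> float:
    """Aggregate annual gain in pounds across the profession."""
    return per_lawyer_gbp * headcount


# Assumption: value of one saved hour, implied by GBP 20,000 for 240 hours.
implied_hourly_gbp = 20_000 / 240

near_term = sector_gain(12_000)                     # low end of the current range
three_year = sector_gain(240 * implied_hourly_gbp)  # 240 hours per lawyer
five_year = sector_gain(370 * implied_hourly_gbp)   # 370 hours per lawyer

for label, value in [
    ("near-term", near_term),
    ("three-year", three_year),
    ("five-year", five_year),
]:
    print(f"{label}: GBP {value / 1e9:.2f}bn per year")
```

On these assumptions the results come to roughly £2.4 billion, £4.0 billion and £6.2 billion a year, in line with the thresholds quoted. Changing the headcount or the hourly value shifts every figure proportionally.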

| Figure | What it measures | Source |
|---|---|---|
| 140-240 | Hours saved per lawyer annually | Thomson Reuters Future of Professionals |
| £2.4B | UK sector productivity gains by 2026 | Legal AI adoption analysis |
| 36.9 | Hours saved monthly by power users | Harvey adoption data |
| 93% | Mid-sized UK firms using AI today | Current adoption benchmarks |

The productivity gains observed so far are consistent in direction but vary with the legal task being automated. In contracts and due diligence workflows, which are high-volume, high-value areas for most UK firms, documented improvements show document review time reductions of 60%, with some advanced deployments achieving up to 90%. Legal research, which traditionally consumed substantial lawyer hours, also benefits: precedent discovery and case law analysis that previously took days can now be completed in minutes.

Research examining Harvey customers—a leading legal AI platform deployed across major firms and corporate legal departments—reveals that over two-thirds of organisations reported measurable benefits within three months of implementation, with more than one-third experiencing quantifiable gains within the first month. "Power users" of Harvey reported saving an estimated 36.9 hours per month on average, compared with 15.7 hours for standard users. Significantly, 100% of participating firms agreed or strongly agreed that lawyers would be upset or disappointed if Harvey access were removed following initial deployment, suggesting rapid integration into essential workflows and perceived value addition.

The market for legal-specific AI solutions has consolidated around several dominant platforms, each offering distinct technological approaches and pricing models. Luminance, developed by AI experts from the University of Cambridge and headquartered in the UK, operates a proprietary legal language model rather than relying on third-party large language models. The platform serves 700 organisations across 70 countries, with particular strength in M&A due diligence. It covers contract review, due diligence, and negotiation, and integrates with the Microsoft Word, Outlook, and Salesforce environments familiar to most legal professionals. In January 2026, Luminance announced a substantial platform upgrade introducing institutional memory capabilities, enabling the system to retain negotiation history and legal decision-making context across enterprise contract portfolios. The upgraded platform is reported to raise contract negotiation time reductions from the previous 70-80% to 90%, and to help legal teams recover over 30% of their available time through its institutional knowledge capabilities.

Thomson Reuters CoCounsel, the rebranded version of Casetext, which Thomson Reuters acquired in 2023 for $650 million, uses OpenAI's GPT models to handle legal research, document review, deposition summaries, and contract analysis. CoCounsel integrates across Thomson Reuters' Westlaw and Practical Law products, a breadth that is particularly valuable for firms already invested in Thomson Reuters research platforms. Thomson Reuters has committed over $200 million to the CoCounsel rollout, signalling substantial investment in expanding AI capabilities across its legal product portfolio. Recent enhancements include Deep Research functionality, which combines agentic AI with Westlaw content to provide thorough legal research results, alongside guided agentic workflows that suggest next steps for complex legal projects.

Evaluate AI Tools Strategically

Helium42 guides legal departments through AI vendor evaluation, implementation planning, and governance framework design. We help you assess tool fit against your firm's specific workflows, assess hallucination risks, and build internal competency before deployment.

Beyond legal-specific platforms, many UK firms use general-purpose AI tools including ChatGPT, Claude, and Gemini for legal tasks, though regulatory guidance increasingly cautions against this approach without substantial protective measures. The appeal of general-purpose models lies partly in their low cost and partly in their flexibility across diverse tasks, but their deployment in legal contexts introduces significant risks. In November 2025, the UK recorded its 20th AI hallucination case in an employment tribunal involving fabricated legal citations, contributing to an aggregate UK total of 24 recorded hallucination incidents by the end of November 2025. Globally, more than 700 cases had been documented over a similar timeframe, indicating systematic problems with AI accuracy in legal contexts. The critical distinction between general-purpose and legal-specific AI tools is whether the system has been trained on legal datasets and fine-tuned to reduce hallucination risks specific to legal citations and case law references.

| Platform | Primary Strengths | Training Approach |
|---|---|---|
| Luminance | M&A due diligence, contract negotiation, institutional memory, low hallucination risk | Proprietary legal language model (University of Cambridge) |
| CoCounsel | Legal research depth, integration with Westlaw, deposition analysis | OpenAI GPT models with Thomson Reuters legal content grounding |
| Harvey | Contract analysis, time savings (36.9 hours/month power users), cross-practice versatility | Legal-specific fine-tuning on proprietary datasets |
| General models (ChatGPT, Claude) | Cost accessibility, flexibility, general knowledge breadth | General-purpose training; elevated hallucination risk in legal contexts (24 UK incidents to Nov 2025) |

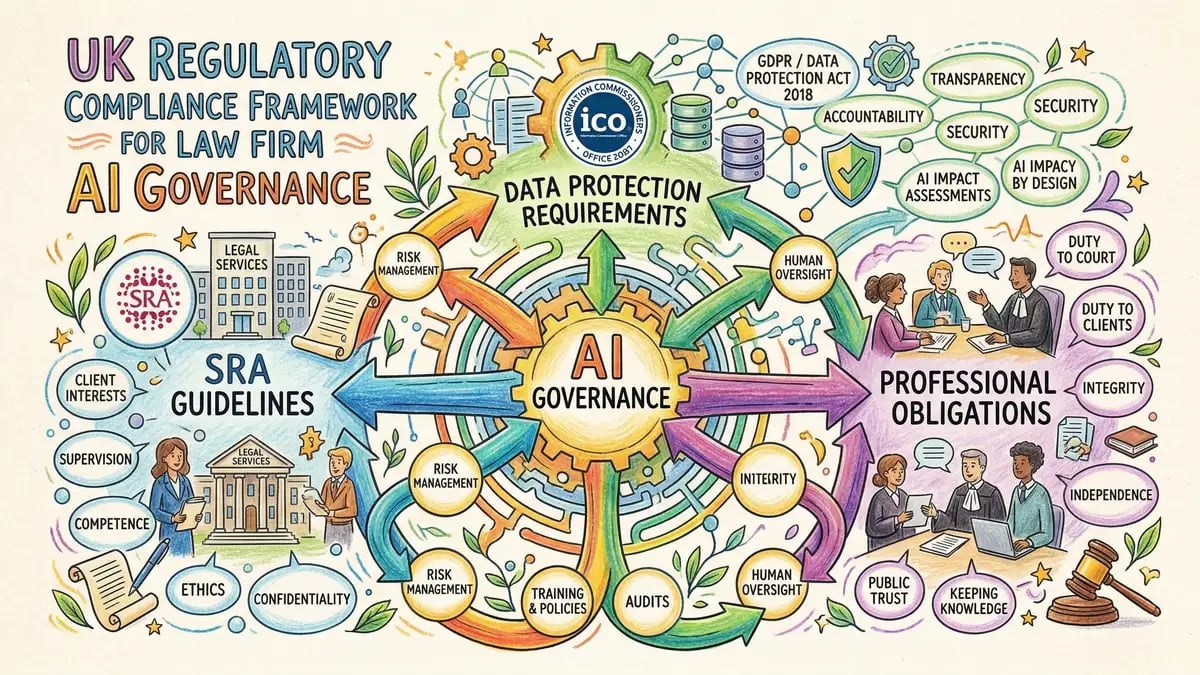

The Solicitors Regulation Authority (SRA) requires law firms to demonstrate governance, risk assessment, and training before deploying AI technologies in client-facing work. The SRA's principles-based approach requires solicitors to understand "the benefits and risks associated" with technologies used to deliver services, with duty of competence now explicitly including AI literacy. This regulatory framework places direct responsibility on managing partners and practice leaders to implement systematic controls rather than relying on tool vendors alone to ensure safe deployment. The SRA requires firms to maintain records demonstrating that AI tools have been evaluated against client data sensitivity, that staff have received appropriate training, and that output is subject to human review and validation before client delivery.

Critical regulatory boundaries exist around data protection and legal professional privilege. The Information Commissioner's Office (ICO) governs data handling requirements under UK GDPR, prohibiting transmission of client data to uncontrolled third-party systems without explicit client consent and documented safeguards. Crucially, the Upper Tribunal has ruled that uploading confidential documents to open AI tools (such as ChatGPT's free tier) constitutes waiving legal professional privilege, meaning that communication ceases to be privileged once uploaded. This principle extends beyond external tools to any AI system where the law firm does not maintain exclusive control over data retention and access. Additionally, only 10% of UK firms currently have formal AI governance policies in place despite 79% of legal professionals now using AI, creating significant regulatory exposure.

Privilege Waiver Risk

Uploading client confidential documents to general-purpose AI tools such as ChatGPT's free tier—even if flagged "confidential" by the user—waives legal professional privilege. The document becomes discoverable in litigation and cannot be withheld from opposing counsel. Exclusive use of legal-specific AI platforms deployed under firm control, or document redaction before general-purpose AI use, are essential risk mitigation measures.

| Requirement | What This Means | Compliance Action |
|---|---|---|
| SRA Competence | Solicitors must understand AI benefits and risks before deployment in client work | Formal AI training programme; documented competency assessment |
| Data Protection (ICO/GDPR) | Client data cannot be transmitted to uncontrolled third parties without explicit consent and documented safeguards | Data processing agreements with AI vendors; client notification in engagement letters |
| Privilege Protection | Uploading to open AI tools waives legal professional privilege; documents become discoverable | Use firm-controlled AI systems only; redact sensitive content before general-purpose AI use |
| Governance Policy | Firms must have formal AI governance policies; only 10% of firms currently have them despite 79% using AI | Develop formal AI policy covering vendor evaluation, staff training, output validation, and incident response |

Safe AI implementation requires systematic governance frameworks that establish clear authority, define permissible use cases, document vendor evaluation decisions, and implement mandatory output validation protocols. The most successful implementations follow a structured five-phase deployment pathway: governance policy development, vendor evaluation, pilot testing in low-risk workflows, formal training ahead of production deployment, and rigorous outcome measurement with adjustment based on observed results. Law firms implementing AI strategically are 3.9 times more likely to experience return on investment than those adopting tools ad hoc without planning, which underscores the value of a systematic approach over reactive tool adoption.

Establish Formal AI Governance Policy

Define responsible AI use across the firm, establish vendor evaluation criteria, require documented approval for all AI deployments, mandate staff training before tool access, establish output validation requirements, and create incident response protocols for hallucinations or data breaches.

Conduct Rigorous Vendor Evaluation

Test candidate tools on dummy datasets before any real client data exposure. Evaluate hallucination rates by running standard test cases and comparing outputs to verified sources. Assess data retention and privacy policies explicitly. For enterprise deployments, require contractual commitments regarding data non-retention, confidentiality, and compliance with UK GDPR and ICO guidance.
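The comparison of tool outputs against verified sources can be sketched as a simple set lookup. This is a minimal illustration, not a vendor's or regulator's method: the function names, the normalisation rule, and the placeholder citations are all hypothetical, and a real evaluation would check against an authoritative citation database.

```python
def normalise(citation: str) -> str:
    """Collapse whitespace and case so trivially different strings match."""
    return " ".join(citation.lower().split())


def unverified_rate(tool_citations: list[str], verified: set[str]) -> tuple[float, list[str]]:
    """Return the share of citations absent from the verified set, plus the offenders."""
    known = {normalise(c) for c in verified}
    flagged = [c for c in tool_citations if normalise(c) not in known]
    rate = len(flagged) / len(tool_citations) if tool_citations else 0.0
    return rate, flagged


# Hypothetical dummy data: placeholder strings, not real authorities.
verified_sources = {
    "Case A v Case B [2020] EXAMPLE 1",
    "Case C v Case D [2021] EXAMPLE 2",
}
tool_output = [
    "Case A v Case B [2020] EXAMPLE 1",
    "case c v case d  [2021] example 2",  # same citation, different formatting
    "Case E v Case F [2022] EXAMPLE 3",   # not in the verified set
]

rate, flagged = unverified_rate(tool_output, verified_sources)
print(f"Unverified citations: {rate:.0%}", flagged)
```

A citation missing from the verified set is only unverified, not proven fabricated, so flagged items still need human review before any conclusion is drawn about the tool.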

Implement Pilot Phase with Low-Risk Workflows

Begin with internal work (research, training materials) rather than client deliverables. Limit pilot to a single practice area or matter type. Require 100% output validation before any use. Document issues, track time savings, and measure quality metrics. Establish clear go/no-go decision point after 30-60 days based on measured results.

Deliver Formal AI Training Before Production Deployment

Fewer than half of legal organisations provide AI training, even though 26% use generative AI. CILEX's AI Academy delivers personalised micro-learning through bite-sized sessions rather than lengthy programmes, cutting training time by 60-80%. Ensure training covers tool capabilities, known limitations, hallucination risks, data protection obligations, and privilege implications before staff deploy tools with client data.

Measure Outcomes Rigorously and Adjust

Document productivity gains in hours saved per matter or per lawyer. Track quality metrics (error rates, client satisfaction). Monitor cost per use and compare against business case projections. Conduct quarterly reviews and adjust deployment scope, training, or tool selection based on evidence rather than assumption. Firms with visible AI strategies are 3.9 times more likely to experience ROI.
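A quarterly review of this kind can be reduced to a small record that compares measured results with the business case. The sketch below is illustrative only: the class, its field names, the sample numbers, and the 80% attainment threshold are assumptions rather than figures from any benchmark.

```python
from dataclasses import dataclass


@dataclass
class QuarterlyReview:
    hours_saved: float       # measured hours saved in the quarter
    hourly_value_gbp: float  # assumed value of one saved hour
    tool_cost_gbp: float     # licence plus training cost for the quarter
    projected_hours: float   # hours the business case predicted (must be > 0)

    @property
    def net_benefit_gbp(self) -> float:
        return self.hours_saved * self.hourly_value_gbp - self.tool_cost_gbp

    @property
    def attainment(self) -> float:
        """Measured hours as a fraction of the business-case projection."""
        return self.hours_saved / self.projected_hours

    def recommendation(self, threshold: float = 0.8) -> str:
        # The default threshold is an illustrative assumption.
        if self.net_benefit_gbp <= 0:
            return "review tool selection"
        if self.attainment < threshold:
            return "adjust scope or training"
        return "continue"


# Hypothetical quarter for a single practice area.
review = QuarterlyReview(
    hours_saved=450, hourly_value_gbp=85, tool_cost_gbp=15_000, projected_hours=600
)
print(review.net_benefit_gbp, review.attainment, review.recommendation())
```

Quality metrics such as error rates and client satisfaction would sit alongside these fields in practice; they are left out here to keep the sketch short.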

The market-wide adoption of AI among UK legal professionals has outpaced formal training and competency development, creating significant skills gaps. Research shows 79% of legal professionals now use AI, up from just 19% in 2023, yet only 10% of firms have formal AI governance policies in place, creating a dangerous gap between adoption and oversight. This divergence means that most legal professionals are deploying AI tools without systematic training on risk management, hallucination mitigation, or data protection obligations—exposing firms to ethical violations, malpractice claims, and client trust issues.

The UK legal profession is developing multiple pathways for AI competency development. CILEX's AI Academy represents one structured approach, delivering personalised micro-learning through bite-sized sessions rather than traditional lengthy programmes. The platform addresses the practical reality that busy legal professionals struggle to find time for extended training. It brings learning into the flow of work through TikTok-style interfaces that integrate with existing HR tech stacks and internal communications. By focusing on essential concepts rather than comprehensive curricula, the approach cuts training time by 60-80% compared with traditional programmes. The platform is reported to accelerate training by up to five times whilst boosting engagement through personalised learning feeds and gamified leaderboards.

Key Takeaway

Competent AI deployment requires three layers of skill development: tool fluency (how to use specific platforms effectively), risk awareness (understanding hallucinations, privilege waivers, and data protection), and strategic thinking (evaluating which problems AI genuinely solves versus where human judgment remains essential). Firms prioritising training and governance see 3.9x higher ROI than those deploying tools ad-hoc, making competency investment directly economically justified.

Essential competencies for legal AI users include:

What is the difference between general-purpose and legal-specific AI tools?

General-purpose tools such as ChatGPT are trained on broad internet data and demonstrate higher hallucination rates in legal contexts—24 UK incidents documented by November 2025 from general-purpose tools, part of a global total exceeding 700 cases. Legal-specific tools such as Luminance or Harvey are trained on legal datasets and fine-tuned to reduce hallucination risks in citations and case law references. The risk profile differs materially: general-purpose tools require heavy output validation before client delivery; legal-specific tools reduce but do not eliminate hallucination risk. Privilege implications also differ: uploading to open tools waives privilege; firm-controlled systems maintain privilege if the firm retains exclusive access and control.

How much time can we realistically expect to save?

Conservative estimates show 140-240 hours per lawyer annually from properly implemented AI tools, translating to approximately £12,000-£20,000+ per lawyer in time savings. More intensive deployments (power users of platforms such as Harvey) report 36.9 hours saved monthly, compared to 15.7 hours for standard users. A mid-sized firm of 50 lawyers might recover 240 hours monthly across the team on contract review tasks alone, worth £60,000 monthly (£720,000 annually) at £250 per hour blended rate. However, these gains depend on document volume, task complexity, firm readiness to change workflows, and ability to redirect saved time to higher-value work rather than reducing headcount.
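The firm-level example is straightforward to reproduce and adapt. The sketch below uses the 240 hours and £250 blended rate from the answer above and annualises the Harvey per-user figures for comparison; the function name is illustrative, and a firm would substitute its own inputs.

```python
def recovered_value(hours_per_month: float, blended_rate_gbp: float) -> tuple[float, float]:
    """Return the (monthly, annual) value of recovered hours in pounds."""
    monthly = hours_per_month * blended_rate_gbp
    return monthly, monthly * 12


# Figures from the 50-lawyer example in the text.
monthly, annual = recovered_value(hours_per_month=240, blended_rate_gbp=250)
print(f"GBP {monthly:,.0f} per month, GBP {annual:,.0f} per year")

# Per-user monthly figures from the Harvey research, annualised.
power_user_hours = 36.9 * 12
standard_user_hours = 15.7 * 12
print(f"{power_user_hours:.1f} vs {standard_user_hours:.1f} hours per year")
```

The value only materialises if the recovered hours are redirected to billable or otherwise higher-value work, so the output is best read as an upper bound.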

What are the key regulatory risks we should understand?

Primary risks include: uploading to open AI tools waiving legal professional privilege (Upper Tribunal ruling); transmission of client data to uncontrolled vendors without explicit consent violating UK GDPR (ICO guidance); staff lacking AI competence, in breach of the SRA duty of competence; hallucinations generating fabricated citations that are submitted to court; and the absence of a formal governance policy creating professional conduct liability. Only 10% of UK firms currently have formal AI governance policies despite 79% of legal professionals using AI, creating material regulatory exposure. The SRA requires demonstrated competence, risk assessment, training, and governance before AI deployment.

How do we choose the right AI tool for our firm?

Vendor selection should prioritise: hallucination rate testing on legal datasets (request specific data from vendors); data retention and privacy commitments (require contractual guarantees); integration with existing workflows (tools such as CoCounsel with Westlaw integration add value to Thomson Reuters users); specific practice area strength (Luminance excels in M&A; Harvey offers broader practice area coverage); and cost structure relative to anticipated time savings. Conduct 30-60 day pilots with low-risk workflows before production deployment. Test on dummy datasets before any client data exposure. Request customer references from comparable firms and contact them directly.

What should our formal AI governance policy cover?

Essential policy components include: approved vendor list with documented evaluation basis; mandatory training requirements before tool access; explicit approval process for new AI tool adoption; data handling requirements (no transmission to uncontrolled third parties without client consent); output validation standards by practice area; hallucination response protocols; privilege protection requirements; documentation standards for governance decisions; incident response procedures; and periodic policy review (quarterly or annual). Assign clear accountability: usually a technology or risk partner responsible for maintaining policy, approving new tools, and monitoring compliance. Document all governance decisions and maintain records demonstrating SRA-required risk assessment and staff training.
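One way to keep a draft policy honest is to check it against the component list above. The sketch below is a hypothetical checklist: the key names are invented labels for the components listed in the answer, not a regulatory schema, and the draft policy is dummy data.

```python
# Hypothetical keys, one per policy component named in the answer above.
REQUIRED_COMPONENTS = (
    "approved_vendor_list",
    "training_requirements",
    "tool_approval_process",
    "data_handling",
    "output_validation",
    "hallucination_response",
    "privilege_protection",
    "documentation_standards",
    "incident_response",
    "periodic_review",
)


def missing_components(policy: dict[str, str]) -> list[str]:
    """List required components that are absent or left empty in a draft policy."""
    return [name for name in REQUIRED_COMPONENTS if not policy.get(name, "").strip()]


# Dummy draft: two sections written, one present but empty.
draft_policy = {
    "approved_vendor_list": "Maintained by the risk partner",
    "training_requirements": "Complete before tool access",
    "data_handling": "",
}
gaps = missing_components(draft_policy)
print(f"{len(gaps)} components still to draft:", gaps)
```

A check like this confirms only that each section exists; whether the content satisfies SRA and ICO expectations remains a matter for the accountable partner.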

How does AI impact our billing models and client expectations?

The £2.4 billion in potential UK legal sector productivity gains by 2026 represents a fundamental business-model challenge, as AI upends traditional billable-hour economics. Firms must decide whether to capture efficiency gains through pricing adjustments (maintaining billable-hour rates whilst improving turnaround time) or through service delivery redesign (value-based pricing, fixed fees, increased matter volume). In-house corporate legal teams increasingly expect AI capabilities matching their own internal deployments, creating competitive pressure on external counsel. Firms that use AI to improve the speed and quality of client service whilst maintaining pricing discipline will strengthen profitability. Firms that give away their productivity gains under client pricing pressure, without offsetting cost reductions, will face margin compression.

Develop Your AI Strategy with Helium42

AI for legal departments is no longer optional. Yet most UK firms lack formal governance, staff lack competency, and AI deployment happens ad-hoc rather than strategically. Helium42 guides legal leadership through AI vendor evaluation, governance framework design, implementation planning, and staff training to ensure your firm captures productivity gains whilst managing risks responsibly.

About the Author

Peter Vogel, Senior AI Strategist

Peter advises UK law firms and corporate legal departments on AI strategy, vendor selection, governance framework design, and staff training. He helps legal leaders understand regulatory requirements under the Solicitors Regulation Authority and Information Commissioner's Office, evaluate AI tools for specific practice areas, and implement systematic controls that capture productivity gains whilst managing hallucination and data protection risks.

Sources: Solicitors Regulation Authority (AI governance requirements); Thomson Reuters (Future of Professionals 2025, CoCounsel adoption data); Luminance (contract review time reductions, institutional memory capabilities); Information Commissioner's Office (UK GDPR requirements, data protection standards); Law Society of England and Wales (professional conduct standards); CILEX (AI Academy training framework, staff competency data); Harvey legal AI platform (adoption metrics, time savings data, user satisfaction research); Upper Tribunal ruling on legal professional privilege and AI tools; UK Employment Tribunal hallucination incident (November 2025); Global AI hallucination case tracking (700+ documented incidents); Antidote AI (billing compliance automation); Thomson Reuters CaseText acquisition ($650 million, 2023) and CoCounsel investment ($200 million); Clio vLex acquisition ($1 billion); Industry analysis of AI ROI in legal practice; 24 recorded UK AI hallucination incidents by November 2025.
