Published by

Peter Vogel

Peter has guided over 500 organisations through AI transformation, with particular expertise in marketing and sales team enablement. His workshops have trained 2,000+ professionals in practical AI application, ...

The Complete Guide to AI Governance for UK Businesses

Artificial intelligence is transforming how organisations operate. Yet according to recent research, only 1% of UK businesses describe their AI implementations as truly mature. Nearly 42% of organisations that invested in AI have abandoned those initiatives entirely, citing implementation failures, governance confusion, and uncontrolled risk exposure.

This is not a technology problem. It is a governance problem.

AI governance (the frameworks, policies, and control mechanisms that guide responsible AI deployment) is no longer optional. Most obligations under the EU AI Act apply from August 2026. The UK Information Commissioner's Office is tightening guidance on algorithmic accountability. Your customers and stakeholders expect you to manage AI risk transparently. Yet most organisations are building AI systems without a coherent governance strategy, deploying first and hoping compliance catches up later.

This comprehensive guide walks you through the end-to-end governance framework that leading UK businesses are adopting. We cover the regulatory landscape, governance architectures, data governance essentials, risk management frameworks, and a practical implementation roadmap you can start this week. By the end, you will have the knowledge and tools to build AI systems your organisation—and your regulators—can trust.

What Is AI Governance and Why Does It Matter?

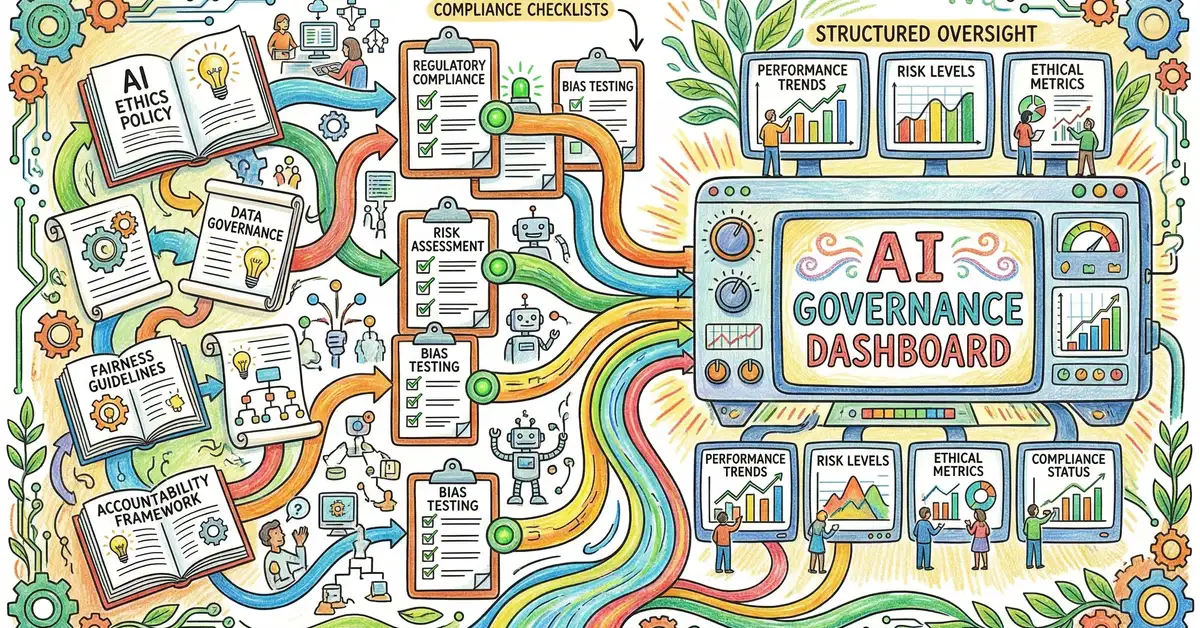

AI governance is the complete system of policies, processes, and people that ensure artificial intelligence systems are deployed responsibly, transparently, and in line with legal and ethical standards. It is not compliance theatre. It is not a document you write and file away. It is a living practice that touches development workflows, data pipelines, model training, deployment gates, and ongoing monitoring.

Traditional software governance often happens after deployment. You build the feature, ship it, then audit it. AI governance cannot work that way. AI systems learn from data. They adapt to patterns. They make decisions that affect people. Once an AI model is in production, retraining it or changing its behaviour can be complex and slow. Governance must begin before the first line of code.

The three pillars of effective AI governance are:

Risk Identification and Classification

Understand which AI systems carry material risk—to users, to your business, to regulatory compliance—and design controls proportionate to that risk.

Accountability and Transparency

Assign clear ownership of AI decisions. Document how models work, how they are trained, and who is responsible if something goes wrong.

Continuous Monitoring and Improvement

Measure model performance in production. Detect bias drift, accuracy decay, and unintended consequences. Fix issues before they become costly.

Organisations that embed governance from day one report 2.5 times higher ROI from their AI investments and significantly faster time to production. Why? Because governance reduces surprise failures, regulatory friction, and costly rework.

Key Takeaway

AI governance is not a compliance function bolted onto engineering teams. It is a shared responsibility—risk, legal, data, and product working together from ideation through production and beyond.

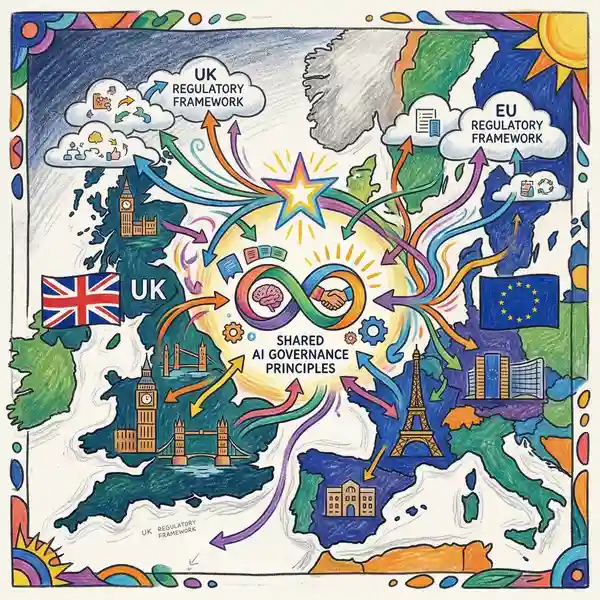

The UK and EU Regulatory Landscape: What You Need to Know

The regulatory environment for AI is evolving rapidly, and UK businesses face a dual challenge: understanding both the EU AI Act and the UK's own emerging framework.

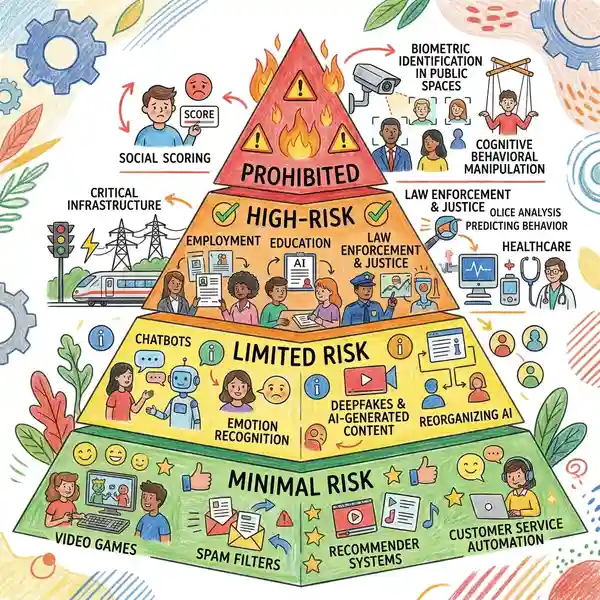

The EU AI Act

The EU AI Act introduces a risk-based classification system, and different rules apply to each risk category. Prohibited AI systems (e.g., social scoring, or real-time remote biometric identification in public spaces outside narrow law-enforcement exceptions) cannot be deployed at all. High-risk AI (used in recruitment, loan decisions, criminal sentencing recommendations, etc.) requires extensive testing, documentation, and human oversight before deployment. Limited-risk systems (like chatbots) need transparency disclosures. Minimal-risk systems face minimal regulatory burden.

The critical date for UK business: August 2026. By this date, most EU AI Act rules apply. If your organisation serves customers in the EU, sells services across borders, or operates a subsidiary in Europe, you must comply. The rule applies regardless of where your company is incorporated.

Additionally, the UK Information Commissioner's Office (ICO) published AI and data protection guidance emphasising data subject rights, algorithmic accountability, and bias detection. Unlike the EU Act's prescriptive risk tiers, the UK approach is principles-based: organisations must demonstrate they can explain decisions made by AI systems and show they are managing bias and discrimination risk.

The UK AI Governance Philosophy

The UK has deliberately chosen a different path. Rather than create a single new regulator or impose rigid risk classifications, the UK relies on existing sectoral regulators—the FCA for financial services, the CMA for competition and consumer protection, the ICO for data and privacy, and so on. Each regulator applies AI principles within their domain.

This creates both flexibility and complexity for business. You have more room to innovate within existing rules. But you also need to understand which regulators might scrutinise your AI systems and what their expectations are.

Regulatory Risk for Cross-Border Operations

Common mistake: Assuming UK-only regulation applies if your company is registered in the UK. The reality is that if you process data from EU customers or deploy AI systems in EU markets, the EU AI Act applies to you, even if you have no physical presence in Europe.

The consequence: Dual compliance frameworks. You must design your AI governance to meet both UK principles-based oversight AND EU risk-based classification. Helium42's UK-Germany base gives us direct experience navigating both systems.

AI Governance Frameworks: ISO 42001 and NIST

Rather than invent governance from scratch, your organisation should adopt proven frameworks. Two have emerged as industry standard: ISO/IEC 42001 and the NIST AI Risk Management Framework.

ISO/IEC 42001 (AI Management Systems)

ISO 42001 is the international standard for AI management systems. It covers the full lifecycle: planning, design, development, deployment, operation, and retirement of AI systems. The standard requires you to:

Establish an AI Governance Framework

Define roles, responsibilities, and decision-making authority. Who approves new AI projects? Who owns data quality? Who is accountable if something fails?

Document AI Systems and Their Purpose

Create an inventory of all AI systems in use. For each, document: what it does, what data it uses, what risks it carries, how it is monitored, and who is responsible.

Assess and Mitigate Risk

Evaluate each AI system for potential harms: bias, accuracy loss, security vulnerability, regulatory non-compliance. Design controls to reduce those risks.

Integrate Governance into Development Workflows

Embed risk checks into your CI/CD pipeline. Require model cards before deployment. Test for bias, drift, and adversarial robustness as part of your standard testing suite.

Monitor, Measure, and Iterate

In production, continuously measure model performance. Track metrics like accuracy, fairness, latency, and user satisfaction. If performance degrades, trigger retraining or manual review protocols.
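The inventory and deployment-gate requirements above can be sketched in a few lines of Python. This is a minimal illustration, not something ISO 42001 prescribes: the `AISystemRecord` fields, the risk labels, and the rule that bias testing is mandatory only for medium- and high-risk systems are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class AISystemRecord:
    """One entry in a hypothetical AI system inventory."""
    name: str
    purpose: str
    data_sources: list[str]
    owner: str
    risk_level: str  # assumed labels: "low", "medium", "high"
    known_risks: list[str] = field(default_factory=list)
    has_model_card: bool = False
    bias_test_passed: bool = False
    monitoring_in_place: bool = False


def deployment_blockers(record: AISystemRecord) -> list[str]:
    """Return the reasons a system is not ready to deploy (empty list = clear)."""
    blockers = []
    if not record.owner.strip():
        blockers.append("no accountable owner")
    if not record.data_sources:
        blockers.append("data sources not documented")
    if not record.has_model_card:
        blockers.append("model card missing")
    if not record.monitoring_in_place:
        blockers.append("no production monitoring")
    # Assumption: bias testing is mandatory only for medium- and high-risk systems.
    if record.risk_level in {"medium", "high"} and not record.bias_test_passed:
        blockers.append("bias test not passed")
    return blockers


cv_screener = AISystemRecord(
    name="cv-screener",
    purpose="Rank job applications for recruiter review",
    data_sources=["applicant-tracking-system"],
    owner="Head of Talent",
    risk_level="high",
    has_model_card=True,
)
print(deployment_blockers(cv_screener))
# ['no production monitoring', 'bias test not passed']
```

A check like this could run as a step in a CI/CD pipeline, failing the build whenever the list of blockers is not empty.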

NIST AI Risk Management Framework

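Complementary to ISO 42001, the NIST AI Risk Management Framework focuses on identifying, measuring, and managing AI-specific risks throughout the system lifecycle. The framework itself is organised around four core functions (Govern, Map, Measure, and Manage). The risks it helps you address can be grouped into four broad categories: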

| Risk Category | Definition and Examples |
|---|---|
| Performance Risk | AI systems that are inaccurate, unreliable, or unstable. Example: a fraud detection model that misclassifies legitimate transactions. |
| Security Risk | AI systems vulnerable to attack or adversarial manipulation. Example: a chatbot that can be prompted to leak confidential information. |
| Fairness and Bias Risk | AI systems that discriminate or treat groups unfairly. Example: a hiring algorithm that systematically disadvantages certain demographics. |
| Transparency and Accountability Risk | AI systems whose logic is opaque or decisions are difficult to explain. Example: a complex deep learning model with no interpretability tools, making it impossible to explain a decision to a regulator or customer. |

Source: NIST AI Risk Management Framework

Building Your AI Governance Operating Model

Effective governance requires structure. Many organisations try to bolt a governance function onto an existing engineering team, creating friction and slowing delivery. The best organisations instead embed governance into the development process itself.

A typical AI governance operating model has three main layers:

Executive Steering

C-suite and board-level oversight. Defines AI ethics principles. Reviews high-risk projects. Approves governance policies. Ensures regulatory compliance.

AI Governance Office

Central team (data, legal, risk, product) that develops governance standards. Reviews AI projects for regulatory and ethical risk. Conducts bias and fairness audits. Maintains AI system inventory.

Embedded Teams

Data scientists, engineers, and domain experts building AI systems. Own data quality. Conduct impact assessments. Implement monitoring and retraining workflows. Answer to both product leadership and governance office.

The key principle: governance is not something separate that happens to your AI systems. It is something built in from day one.

Data Governance: The Foundation of Responsible AI

AI systems are only as good as the data they train on. Poor data leads to poor AI. Biased data leads to biased AI. Inaccurate data leads to inaccurate predictions. Thus, data governance is not separate from AI governance—it is foundational.

A robust data governance framework must address:

Data Provenance and Lineage

Where does the data come from? How is it collected? How many times has it been transformed? Who has access? An AI system trained on data of unknown origin is a black box within a black box.

Data Quality and Completeness

How complete is the dataset? What is the error rate? Are there missing values? Are measurements consistent? Quality issues in training data translate directly into model errors in production.

Data Fairness and Bias Detection

Is the training data representative of all groups the model will serve? Are certain demographics over- or under-represented? Biased training data leads to discriminatory AI decisions.

Privacy and Consent

Is the data collected with proper legal consent? Have you pseudonymised or anonymised sensitive attributes? Can data subjects exercise their rights (access, deletion, objection)?

The ICO's AI guidance specifically addresses data practices in AI systems. The core message: you are responsible for the data you use, even if it was collected by a third party or aggregated from multiple sources. Establish clear ownership and audit trails.
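Two of the questions above (how complete is the dataset, and is each group adequately represented) lend themselves to simple automated checks. The sketch below is illustrative only: the column names, the reference shares, and the 10-percentage-point tolerance are assumptions, and a real check would run against your own data and an agreed reference population.

```python
from collections import Counter


def missing_rate(rows: list[dict], column: str) -> float:
    """Share of rows where `column` is absent, None, or an empty string."""
    if not rows:
        return 0.0
    missing = sum(1 for row in rows if row.get(column) in (None, ""))
    return missing / len(rows)


def group_shares(rows: list[dict], column: str) -> dict[str, float]:
    """Share of rows per value of `column`, ignoring rows where it is missing."""
    counts = Counter(row[column] for row in rows if row.get(column) not in (None, ""))
    total = sum(counts.values())
    return {group: n / total for group, n in counts.items()} if total else {}


def under_represented(shares: dict, reference: dict, tolerance: float = 0.10) -> list[str]:
    """Groups whose share falls more than `tolerance` below the reference share."""
    return sorted(group for group, expected in reference.items()
                  if shares.get(group, 0.0) < expected - tolerance)


# Toy training data (hypothetical columns and values).
rows = [
    {"age_band": "18-34", "income": 28000},
    {"age_band": "18-34", "income": None},
    {"age_band": "35-54", "income": 41000},
    {"age_band": "18-34", "income": 35000},
    {"age_band": "55+", "income": 30000},
]
# Assumed make-up of the population the model will serve.
reference = {"18-34": 0.30, "35-54": 0.35, "55+": 0.35}

print(missing_rate(rows, "income"))                                  # 0.2
print(under_represented(group_shares(rows, "age_band"), reference))  # ['35-54', '55+']
```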

Risk Assessment and AI Impact Assessments

Before you deploy any AI system, you must assess the risks it poses. A bank deploying AI for credit decisions faces different risks than a marketing team using AI to optimise email send times. The assessment methodology must be proportionate to the use case.

An AI Impact Assessment (AIA) is a structured process for evaluating AI system risk. It typically covers:

| Assessment Area | Key Questions |
|---|---|
| Use Case and Purpose | What decision or action does the AI system inform? Who is affected? What are the consequences of an incorrect decision? |
| Data and Bias | What is the training data? Are all relevant groups represented? Has the model been tested for disparate impact? |
| Accuracy and Fairness | What is the model's overall accuracy? Does accuracy vary significantly across demographic groups? What is the false positive rate? |
| Explainability | Can humans understand why the model made a specific prediction? Are there explanations available for regulatory or customer review? |
| Human Oversight | How much human review and approval is required before the AI recommendation becomes a decision? What are the override procedures? |
| Regulatory and Legal Risk | Does the use case fall under EU AI Act high-risk categories? What do relevant UK regulators (ICO, FCA, etc.) expect? Are there legal limits on how the decision can be made? |

Source: AI Impact Assessment framework adapted from the EU Article 29 Working Party and NIST AI Risk Management Framework

High-risk AI systems should be assessed formally before deployment and reviewed periodically in production. Lower-risk systems (like content recommendation) may use a lighter-weight assessment process.
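One way to make "proportionate" concrete is to route each use case to an assessment depth from a handful of screening answers. The sketch below is a hypothetical triage rule, not a legal test: the three screening fields and the tier names are assumptions, and whether a use case falls into an EU AI Act high-risk category is an input from your own legal review, not something the code works out.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    affects_individuals: bool    # output informs decisions about specific people
    eu_high_risk_category: bool  # conclusion of your legal review, not computed here
    fully_automated: bool        # no human reviews the output before it takes effect


def assessment_tier(use_case: UseCase) -> str:
    """Map a use case to an assessment depth: 'formal', 'standard', or 'lightweight'."""
    if use_case.eu_high_risk_category:
        return "formal"
    if use_case.affects_individuals and use_case.fully_automated:
        return "formal"
    if use_case.affects_individuals:
        return "standard"
    return "lightweight"


credit = UseCase("credit-decisions", affects_individuals=True,
                 eu_high_risk_category=True, fully_automated=False)
send_times = UseCase("email-send-time", affects_individuals=False,
                     eu_high_risk_category=False, fully_automated=True)

print(assessment_tier(credit))      # formal
print(assessment_tier(send_times))  # lightweight
```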

Governance for Agentic AI: An Emerging Challenge

A new frontier has emerged in AI governance: agentic AI—AI systems that operate autonomously, taking actions in the real world with minimal human oversight. Examples include AI agents that autonomously place trades on behalf of fund managers, AI systems that automatically adjust manufacturing parameters, or customer service bots that independently authorise refunds.

Traditional AI governance frameworks assume humans remain in the loop. An agentic AI system that acts autonomously breaks that assumption. You cannot simply review every decision after the fact.

Governance for agentic AI requires:

Agent Inventory and Classification

Know every autonomous AI agent operating in your organisation. Document what decisions it can make, what actions it can take, and what the financial or reputational impact would be if it went wrong.

Tiered Autonomy Controls

Low-risk agents might act freely, with all of their actions audited weekly. Medium-risk agents require human pre-approval for actions above a threshold. High-risk agents require real-time monitoring and can be stopped instantly by a human operator.

Action Logging and Auditability

Every decision and action taken by an agent must be logged with full context: why the agent made that decision, what information was available, what guardrails applied. An immutable audit trail is not optional for agentic AI.

Clear Accountability

If an agentic AI system causes harm, someone is responsible. That person or team must be clearly identified. Accountability cannot be ambiguous or distributed across too many parties.

Organisations deploying agentic AI without this structure are operating blind. They have no visibility into what their agents are doing, no ability to understand why decisions were made, and no clear accountability if something goes wrong.
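To illustrate how tiered autonomy and action logging fit together, here is a minimal Python sketch. It makes several assumptions: the tier names, the 100.0 approval threshold, and the `decide` function are hypothetical, and the hash-chained list only makes tampering detectable. A production audit trail would also need write-once storage to be genuinely immutable.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _digest(prev_hash: str, record: dict) -> str:
    body = json.dumps(record, sort_keys=True)
    return hashlib.sha256((prev_hash + body).encode()).hexdigest()


@dataclass
class AuditLog:
    """Append-only log in which each entry stores the hash of the previous one."""
    entries: list[dict] = field(default_factory=list)

    def append(self, record: dict) -> None:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        self.entries.append({"record": record, "prev_hash": prev_hash,
                             "hash": _digest(prev_hash, record)})

    def verify(self) -> bool:
        """Recompute every hash; an edited or removed entry breaks the chain."""
        prev_hash = GENESIS
        for entry in self.entries:
            expected = _digest(prev_hash, entry["record"])
            if entry["prev_hash"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True


def decide(tier: str, action: str, amount: float, reason: str, log: AuditLog,
           halted: bool = False, approval_threshold: float = 100.0) -> str:
    """Apply the tier's control and log the outcome with its context."""
    if halted:  # a human operator has stopped this agent
        outcome = "blocked"
    elif tier == "medium" and amount > approval_threshold:
        outcome = "needs_approval"
    else:  # low tier is audited later; high tier runs under live monitoring
        outcome = "execute"
    log.append({"tier": tier, "action": action, "amount": amount,
                "reason": reason, "outcome": outcome})
    return outcome


log = AuditLog()
print(decide("low", "refund", 20.0, "duplicate charge", log))      # execute
print(decide("medium", "refund", 250.0, "damaged goods", log))     # needs_approval
print(decide("high", "trade", 10.0, "rebalance", log, halted=True))  # blocked
print(log.verify())                                                # True
```

Changing any logged field afterwards causes `verify()` to return `False`, which is what an auditor would check first.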

Ethical Considerations and Responsible AI Principles

Beyond regulatory compliance, leading organisations adopt explicit ethical principles for AI. These principles set the cultural and operational tone for how AI is developed and used.

Common ethical principles include:

Fairness

AI systems should not discriminate. All people should be treated fairly, regardless of protected characteristics like race, gender, or age.

Transparency

When AI makes decisions that affect people, they deserve to know and to understand why. Hidden algorithms erode trust.

Accountability

Someone must be responsible for the impact of an AI system. There must be a mechanism for recourse if that system causes harm.

Privacy

AI systems should respect personal privacy. Data should be collected and used only for stated purposes, with informed consent.

Security

AI systems should be protected against adversarial attack and unintended manipulation. Security is a fundamental ethical responsibility.

Human Autonomy

Humans must retain control over significant decisions. AI should augment human judgment, not replace it without meaningful human oversight.

Embedding these principles into your governance framework aligns technical compliance with broader organisational values. It also builds trust with customers, employees, and regulators.

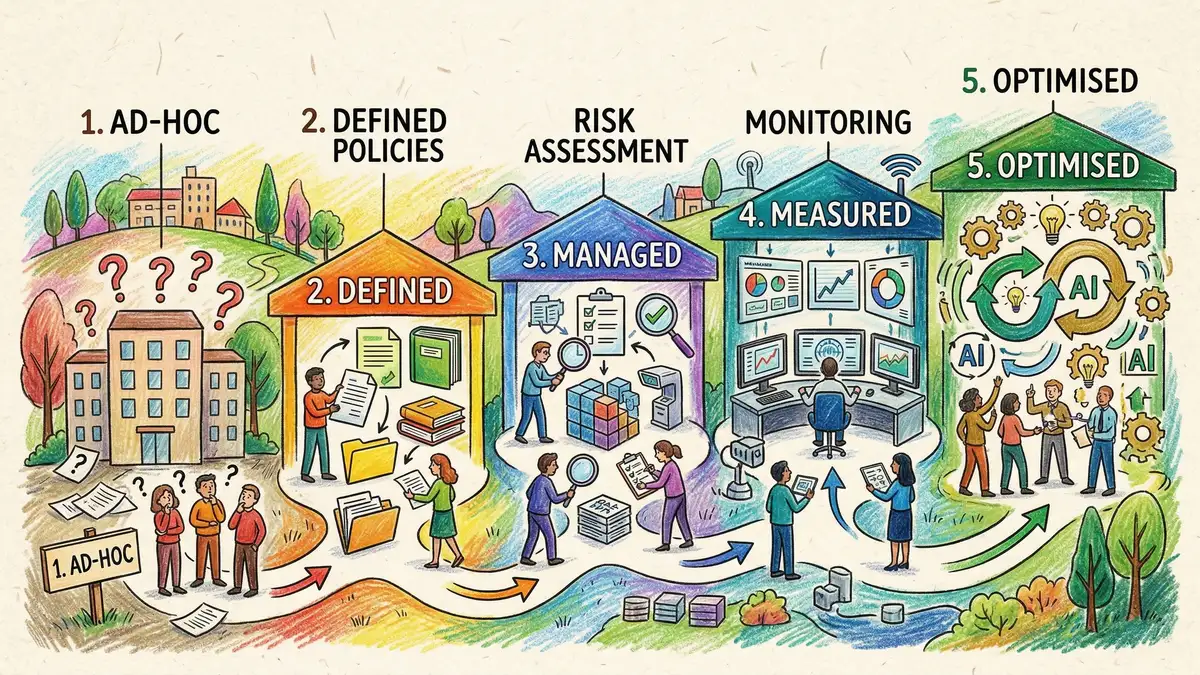

Practical Implementation Roadmap

The governance frameworks and principles discussed above are comprehensive, but where do you actually start? Here is a step-by-step roadmap you can implement over the next 12 months:

Weeks 1–2: Inventory and Assessment

Identify every AI system currently in use across your organisation. For each, answer: What does it do? Who uses it? What data does it process? Who owns it? Create a simple spreadsheet. This transparency is your foundation.

Weeks 3–6: Define Governance Framework

Create a draft governance policy. Define roles and responsibilities. Establish decision-making authority. Document how and when AI projects require approval. Draft a risk assessment template. Get buy-in from leadership and key stakeholders.

Weeks 7–12: Risk Assessment for Existing Systems

Using your risk template, conduct formal assessments for high-impact AI systems (those affecting financial decisions, customer outcomes, or regulatory exposure). Document findings and required mitigations.

Months 4–6: Implement Data Governance Controls

Establish data lineage and quality controls. Implement bias testing for high-risk models. Audit data for regulatory compliance (UK GDPR and EU GDPR, UK data protection reforms, ICO guidance). Train data teams on governance standards.

Months 7–9: Integrate Governance into Development Workflows

Add governance checkpoints to your development pipeline. Require risk assessments before model training. Implement automated bias and fairness testing. Establish monitoring and retraining schedules.

Months 10–12: Launch Monitoring and Continuous Improvement

Implement production monitoring dashboards. Measure model performance, fairness metrics, and drift. Establish escalation procedures for performance issues. Run quarterly governance reviews. Plan for ISO 42001 certification (optional but valuable).

This roadmap is progressive. You will not have perfect governance after month 12, but you will have a mature, defensible system that demonstrates genuine care for responsible AI deployment.

AI governance is not a burden for your business—it is a competitive advantage. Organisations with mature governance deploy AI faster, with lower risk, and higher user trust.

Tools and Platforms for AI Governance

Implementing governance at scale requires tooling. A spreadsheet is a fine starting point, but once you run dozens of models, manually reviewing every training run or maintaining the inventory by hand stops being practical. Here are the key categories of tools to evaluate:

Model Registry and Version Control

MLflow, DVC, Weights & Biases, Hugging Face Model Hub. Centrally manage all models, versions, metadata, and lineage. Ensure reproducibility.

Bias and Fairness Testing

Fairlearn, AI Fairness 360, Alibi Detect (drift and outlier detection). Automatically test models for discriminatory bias and fairness regressions. Integrate into CI/CD pipelines.

Model Monitoring and Observability

WhyLabs, Arize, Datadog, New Relic. Continuously monitor model performance in production. Alert on drift, accuracy loss, and anomalies. Log decisions for audit trails.

Preparing for Regulatory Scrutiny

Regulators are increasingly scrutinising AI systems. The UK Information Commissioner's Office, the Financial Conduct Authority, and sectoral regulators have all signalled they will conduct AI audits and enforcement actions.

To prepare:

Document Everything

If a regulator asks "how do you ensure fairness in your AI systems?" you need evidence, not excuses. Maintain design documents, impact assessments, testing results, audit logs, and monitoring dashboards.

Establish Explainability

For high-impact decisions (credit, hiring, benefits eligibility, etc.), you must be able to explain why an AI system made a particular recommendation. Implement model explainability tools. Train teams on how to communicate AI decisions clearly.

Enable Third-Party Audits

Consider external AI audits by independent parties. This demonstrates good faith and can identify blind spots your internal team might miss. It also provides evidence to regulators that you take governance seriously.

Build Relationships with Regulators

If you are deploying high-risk AI (financial services, healthcare, recruitment), proactively engage with relevant regulators. Many publish guidance for businesses. Responding early and transparently to regulatory questions builds credibility.

Frequently Asked Questions

Does the EU AI Act apply to UK businesses?

Yes, if your organisation serves customers in the EU, processes data from EU residents, or has a subsidiary in Europe. The Act applies based on where the AI system is used, not where the company is incorporated. UK-only operations face different compliance obligations under the ICO's principles-based approach.

What is the difference between ISO 42001 and the NIST AI Risk Management Framework?

ISO 42001 is a management systems standard covering the full AI lifecycle. NIST is a risk management framework focused on identifying and mitigating AI-specific risks. They are complementary. ISO 42001 provides the structure and organisational context. NIST provides detailed risk assessment methodology. Many organisations implement both.

How do we measure AI fairness if there is no single "correct" definition?

Fairness is contextual. In hiring, you might measure whether the system selects equally qualified candidates across demographic groups. In lending, you might examine whether approval rates are similar across groups with equivalent credit risk. Work with domain experts, regulators, and affected communities to define fairness metrics appropriate to your use case. Then measure consistently and transparently.
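As one concrete example, the gap in selection rates between groups (often called demographic parity difference) can be computed in a few lines. This is a sketch of a single metric among many, with made-up data. To approximate the hiring example above, you would first filter the records down to equally qualified candidates. What counts as an acceptable gap is a policy decision this code does not make.

```python
from collections import defaultdict


def selection_rates(decisions) -> dict[str, float]:
    """decisions: iterable of (group, selected) pairs; returns the selection rate per group."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in decisions:
        totals[group] += 1
        selected[group] += int(was_selected)
    return {group: selected[group] / totals[group] for group in totals}


def parity_gap(rates: dict[str, float]) -> float:
    """Difference between the highest and lowest group selection rates."""
    return max(rates.values()) - min(rates.values())


# Hypothetical outcomes: group A has 4 of 8 selected, group B has 2 of 8.
decisions = ([("A", True)] * 4 + [("A", False)] * 4
             + [("B", True)] * 2 + [("B", False)] * 6)

rates = selection_rates(decisions)
print(rates)              # {'A': 0.5, 'B': 0.25}
print(parity_gap(rates))  # 0.25
```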

Do we need human-in-the-loop review for every AI decision?

Not for every decision, but yes for high-impact decisions or those affecting vulnerable populations. A chatbot recommending products does not require human approval. An AI system recommending criminal sentences does. The governance framework must define, for each AI system, what level of human oversight is required. This is your risk assessment outcome.

How often should we retrain AI models?

It depends on the use case and the rate of data change. Some models drift significantly within weeks. Others remain stable for years. Implement production monitoring to detect drift and accuracy loss. When metrics degrade below acceptable thresholds, trigger retraining. The governance framework should define clear escalation procedures and retraining SLAs.
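A threshold-based trigger of this kind might look like the sketch below. It uses the Population Stability Index (PSI), one common drift measure, on pre-binned counts of a single feature. The 0.25 PSI limit and the 0.90 accuracy floor are placeholder assumptions. Your governance framework should set its own values for each model.

```python
import math


def psi(baseline_counts, live_counts, eps: float = 1e-6) -> float:
    """Population Stability Index between two binned distributions of one feature."""
    base_total = sum(baseline_counts)
    live_total = sum(live_counts)
    score = 0.0
    for base, live in zip(baseline_counts, live_counts):
        # Floor each share at eps so empty bins do not cause log(0) or division by zero.
        base_share = max(base / base_total, eps)
        live_share = max(live / live_total, eps)
        score += (live_share - base_share) * math.log(live_share / base_share)
    return score


def should_retrain(psi_score: float, accuracy: float,
                   psi_limit: float = 0.25, min_accuracy: float = 0.90) -> bool:
    """Trigger retraining when drift is too high or accuracy is too low."""
    return psi_score > psi_limit or accuracy < min_accuracy


training_bins = [50, 30, 20]  # feature histogram at training time (toy numbers)
live_bins = [20, 30, 50]      # the same bins measured in production

drift = psi(training_bins, live_bins)
print(round(drift, 2))                        # 0.55
print(should_retrain(drift, accuracy=0.93))   # True
```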

Can we use third-party AI models (like large language models) without building our own governance?

No. Even if you licence a third-party model, you are responsible for how it is used, what data it processes, and how it affects your customers. You still need to conduct impact assessments, test for bias, implement monitoring, and maintain an audit trail. Third-party models reduce engineering burden but not governance responsibility. The EU AI Act places obligations on the organisations that deploy AI systems, not only on the providers that build them.

What if we discover bias in a production AI system?

First, establish the scope: how many decisions were affected? What was the bias? Document everything. Then, depending on severity, either retrain the model immediately, implement additional human review, or temporarily disable the system pending investigation. Notify affected parties if required by law (ICO guidance, GDPR, etc.). Update your governance controls to prevent similar issues. This is not a failure—it is governance working as intended.

Getting Started: Next Steps

AI governance is not a one-time project. It is an ongoing practice that evolves as your AI systems mature and as regulatory requirements change. But you must start somewhere.

Here are the immediate next steps:

Audit Your Current AI Systems

Start this week. Create a simple inventory of every AI model, algorithm, and automated decision system in use. Include the use case, the data source, the owner, and any known risks. This inventory is your foundation.

Assess Your Regulatory Exposure

Which of your AI systems fall under the EU AI Act? Which are regulated by the FCA, ICO, or other UK regulators? Which affect vulnerable populations (children, elderly, financial hardship)? Rank by regulatory risk.

Define Governance Roles and Responsibilities

Form a cross-functional AI governance team: data, engineering, legal, product, risk. Define who owns what. Establish escalation paths. Get executive sponsorship. Without clear accountability, governance initiatives stall.

Get Expert Guidance

If your governance journey feels overwhelming, seek expert support. Helium42 specialises in AI governance frameworks, having navigated both UK and EU regulatory landscapes. We work with teams to design governance structures tailored to your business, implement controls in your development workflows, and prepare for regulatory scrutiny.

The organisations that invest in governance today will be the ones that deploy AI with confidence tomorrow. Governance is not a cost. It is an investment in your competitive advantage, your regulatory standing, and ultimately, your organisational resilience.

Ready to build AI governance into your organisation?

Helium42 combines education with implementation. We help UK and EU businesses establish governance frameworks that actually work, integrating control into your development workflows from day one.

Peter Vogel

AI Strategy Director, Helium42

Peter leads AI governance research and implementation projects across UK and EU markets. He advises boards and executive teams on responsible AI strategy, regulatory compliance, and building AI capability from education through to production deployment. Peter has deep experience in cross-border governance, having worked with both UK principles-based and EU prescriptive regulatory frameworks.

Sources: European Commission: EU AI Act Summary, ICO: AI and Data Protection Guidance, NIST AI Risk Management Framework, ISO/IEC 42001 Standard, OECD AI Framework, Alan Turing Institute Research on UK AI Governance
